Documentation

- 1: Allocation Assignments

- 1.1: Getting Started

- 1.1.1: Allocations Quick Start

- 1.1.2: Rule Based Tagging

- 1.1.3: Why are Allocations Useful

- 1.2: Configure Allocations

- 1.2.1: Configure an Allocation

- 1.2.2: Recursive Allocations

- 1.3: Results and Troubleshooting

- 1.3.1: Allocation Results

- 1.3.2: Troubleshooting Allocations

- 2: Custom App Sandbox

- 3: Dashboards

- 3.1: Learning About Dashboards

- 3.2: Using Dashboards

- 3.3: Formatting Numbers and Other Data Types

- 3.4: Example Calculated Columns

- 3.5: Example Metrics

- 4: Data and Service Connectors

- 4.1: Cloud Service Connections

- 4.1.1: Quandl Connector

- 4.2: Database and Data Lake Connections

- 4.2.1: Amazon Athena

- 4.2.2: Amazon Redshift

- 4.2.3: Apache Doris

- 4.2.4: Apache Hive

- 4.2.5: Apache Spark

- 4.2.6: Azure Databricks

- 4.2.7: Databend

- 4.2.8: Exasol

- 4.2.9: Greenplum

- 4.2.10: IBM DB2

- 4.2.11: IBM Informix

- 4.2.12: Microsoft Fabric

- 4.2.13: Microsoft SQL Server

- 4.2.14: MySQL

- 4.2.15: ODBC

- 4.2.16: Oracle

- 4.2.17: PlaidCloud Lakehouse

- 4.2.18: PostgreSQL

- 4.2.19: Presto

- 4.2.20: SAP HANA

- 4.2.21: Snowflake

- 4.2.22: StarRocks

- 4.2.23: Trino

- 4.3: ERP System Connections

- 4.3.1: Infor Connector

- 4.3.2: JD Edwards (Legacy) Connector

- 4.3.3: Oracle EBS Connector

- 4.3.4: Oracle Fusion Connector

- 4.3.5: SAP Analytics Cloud Connector

- 4.3.6: SAP ECC Connector

- 4.3.7: SAP Profitability and Cost Management (PCM) Connector

- 4.3.8: SAP Profitability and Performance Management (PaPM) Connector

- 4.3.9: SAP S/4HANA Connector

- 4.4: Git Repository Connections

- 4.4.1: AWS CodeCommit Repository Connector

- 4.4.2: Azure Repos Repository Connector

- 4.4.3: BitBucket Repository Connector

- 4.4.4: GitHub Repository Connector

- 4.4.5: GitLab Repository Connector

- 4.5: Google Service Connections

- 4.5.1: Google BigQuery Connector

- 4.5.2: Google Sheets

- 4.6: Open Table Format Connections

- 4.6.1: Apache Hive Open Table Format

- 4.6.2: Apache Hudi Open Table Format

- 4.6.3: Apache Iceberg Open Table Format

- 4.6.4: Delta Lake Open Table Format (Databricks Catalog)

- 4.7: REST Connections

- 4.7.1: Gusto REST Connector

- 4.7.2: Microsoft Dynamics 365 REST Connector

- 4.7.3: Mulesoft REST Connector

- 4.7.4: Netsuite REST Connector

- 4.7.5: Paycor REST Connector

- 4.7.6: Quickbooks REST Connector

- 4.7.7: Ramp REST Connector

- 4.7.8: Sage Intacct REST Connector

- 4.7.9: Salesforce REST Connector

- 4.7.10: Stripe REST Connector

- 4.7.11: Workday REST Connector

- 4.8: Team Collaboration Connections

- 4.8.1: Microsoft Teams Connector

- 4.8.2: Slack Connector

- 5: Data Lakehouse Service

- 5.1: Getting Started

- 5.2: Pricing

- 6: Data Management - Dimensions

- 6.1: Dimension Functions for Expressions and Aggregations

- 6.2: Loading and Unloading Dimensions

- 6.3: Using Dimensions (Hierarchies)

- 6.4: Using Dimensions (Hierarchies)

- 7: Data Management - Tabular

- 7.1: Using Tables and Views

- 7.2: Table Explorer

- 7.3: Publishing Tables

- 8: Expressions

- 8.1: Aggregate Functions

- 8.1.1: ANY

- 8.1.2: APPROX_COUNT_DISTINCT

- 8.1.3: ARG_MAX

- 8.1.4: ARG_MIN

- 8.1.5: ARRAY_AGG

- 8.1.6: AVG

- 8.1.7: AVG_IF

- 8.1.8: COUNT

- 8.1.9: COUNT_DISTINCT

- 8.1.10: COUNT_IF

- 8.1.11: COVAR_POP

- 8.1.12: COVAR_SAMP

- 8.1.13: GROUP_ARRAY_MOVING_AVG

- 8.1.14: GROUP_ARRAY_MOVING_SUM

- 8.1.15: HISTOGRAM

- 8.1.16: JSON_ARRAY_AGG

- 8.1.17: JSON_OBJECT_AGG

- 8.1.18: KURTOSIS

- 8.1.19: MAX

- 8.1.20: MAX_IF

- 8.1.21: MEDIAN

- 8.1.22: MEDIAN_TDIGEST

- 8.1.23: MIN

- 8.1.24: MIN_IF

- 8.1.25: QUANTILE_CONT

- 8.1.26: QUANTILE_DISC

- 8.1.27: QUANTILE_TDIGEST

- 8.1.28: QUANTILE_TDIGEST_WEIGHTED

- 8.1.29: RETENTION

- 8.1.30: SKEWNESS

- 8.1.31: STDDEV_POP

- 8.1.32: STDDEV_SAMP

- 8.1.33: STRING_AGG

- 8.1.34: SUM

- 8.1.35: SUM_IF

- 8.1.36: WINDOW_FUNNEL

- 8.2: AI Functions

- 8.2.1: AI_EMBEDDING_VECTOR

- 8.2.2: AI_TEXT_COMPLETION

- 8.2.3: AI_TO_SQL

- 8.2.4: COSINE_DISTANCE

- 8.3: Array Functions

- 8.3.1: ARRAY_AGGREGATE

- 8.3.2: ARRAY_APPEND

- 8.3.3: ARRAY_APPLY

- 8.3.4: ARRAY_CONCAT

- 8.3.5: ARRAY_CONTAINS

- 8.3.6: ARRAY_DISTINCT

- 8.3.7: ARRAY_FILTER

- 8.3.8: ARRAY_FLATTEN

- 8.3.9: ARRAY_GET

- 8.3.10: ARRAY_INDEXOF

- 8.3.11: ARRAY_LENGTH

- 8.3.12: ARRAY_PREPEND

- 8.3.13: ARRAY_REDUCE

- 8.3.14: ARRAY_REMOVE_FIRST

- 8.3.15: ARRAY_REMOVE_LAST

- 8.3.16: ARRAY_SIZE

- 8.3.17: ARRAY_SLICE

- 8.3.18: ARRAY_SORT

- 8.3.19: ARRAY_TO_STRING

- 8.3.20: ARRAY_TRANSFORM

- 8.3.21: ARRAY_UNIQUE

- 8.3.22: ARRAYS_ZIP

- 8.3.23: CONTAINS

- 8.3.24: GET

- 8.3.25: RANGE

- 8.3.26: SLICE

- 8.3.27: UNNEST

- 8.4: Bitmap Functions

- 8.4.1: BITMAP_AND

- 8.4.2: BITMAP_AND_COUNT

- 8.4.3: BITMAP_AND_NOT

- 8.4.4: BITMAP_CARDINALITY

- 8.4.5: BITMAP_CONTAINS

- 8.4.6: BITMAP_COUNT

- 8.4.7: BITMAP_HAS_ALL

- 8.4.8: BITMAP_HAS_ANY

- 8.4.9: BITMAP_INTERSECT

- 8.4.10: BITMAP_MAX

- 8.4.11: BITMAP_MIN

- 8.4.12: BITMAP_NOT

- 8.4.13: BITMAP_NOT_COUNT

- 8.4.14: BITMAP_OR

- 8.4.15: BITMAP_OR_COUNT

- 8.4.16: BITMAP_SUBSET_IN_RANGE

- 8.4.17: BITMAP_SUBSET_LIMIT

- 8.4.18: BITMAP_UNION

- 8.4.19: BITMAP_XOR

- 8.4.20: BITMAP_XOR_COUNT

- 8.4.21: INTERSECT_COUNT

- 8.4.22: SUB_BITMAP

- 8.5: Conditional Functions

- 8.5.1: [ NOT ] BETWEEN

- 8.5.2: [ NOT ] IN

- 8.5.3: AND

- 8.5.4: CASE

- 8.5.5: COALESCE

- 8.5.6: Comparison Methods

- 8.5.7: ERROR_OR

- 8.5.8: GREATEST

- 8.5.9: IF

- 8.5.10: IFNULL

- 8.5.11: IS [ NOT ] DISTINCT FROM

- 8.5.12: IS_ERROR

- 8.5.13: IS_NOT_ERROR

- 8.5.14: IS_NOT_NULL

- 8.5.15: IS_NULL

- 8.5.16: LEAST

- 8.5.17: NULLIF

- 8.5.18: NVL

- 8.5.19: NVL2

- 8.5.20: OR

- 8.6: Context Functions

- 8.6.1: CONNECTION_ID

- 8.6.2: CURRENT_CATALOG

- 8.6.3: CURRENT_USER

- 8.6.4: DATABASE

- 8.6.5: LAST_QUERY_ID

- 8.6.6: VERSION

- 8.7: Conversion Functions

- 8.7.1: BUILD_BITMAP

- 8.7.2: CAST, ::

- 8.7.3: TO_BINARY

- 8.7.4: TO_BITMAP

- 8.7.5: TO_BOOLEAN

- 8.7.6: TO_FLOAT32

- 8.7.7: TO_FLOAT64

- 8.7.8: TO_HEX

- 8.7.9: TO_INT16

- 8.7.10: TO_INT32

- 8.7.11: TO_INT64

- 8.7.12: TO_INT8

- 8.7.13: TO_STRING

- 8.7.14: TO_TEXT

- 8.7.15: TO_UINT16

- 8.7.16: TO_UINT32

- 8.7.17: TO_UINT64

- 8.7.18: TO_UINT8

- 8.7.19: TO_VARCHAR

- 8.7.20: TO_VARIANT

- 8.7.21: TRY_CAST

- 8.7.22: TRY_TO_BINARY

- 8.8: Date & Time Functions

- 8.8.1: ADD TIME INTERVAL

- 8.8.2: CURRENT_TIMESTAMP

- 8.8.3: DATE

- 8.8.4: DATE DIFF

- 8.8.5: DATE_ADD

- 8.8.6: DATE_FORMAT

- 8.8.7: DATE_PART

- 8.8.8: DATE_SUB

- 8.8.9: DATE_TRUNC

- 8.8.10: DAY

- 8.8.11: EXTRACT

- 8.8.12: LAST_DAY

- 8.8.13: MONTH

- 8.8.14: MONTHS_BETWEEN

- 8.8.15: NEXT_DAY

- 8.8.16: NOW

- 8.8.17: PREVIOUS_DAY

- 8.8.18: QUARTER

- 8.8.19: STR_TO_DATE

- 8.8.20: STR_TO_TIMESTAMP

- 8.8.21: SUBTRACT TIME INTERVAL

- 8.8.22: TIME_SLOT

- 8.8.23: TIMESTAMP_DIFF

- 8.8.24: TIMEZONE

- 8.8.25: TO_DATE

- 8.8.26: TO_DATETIME

- 8.8.27: TO_DAY_OF_MONTH

- 8.8.28: TO_DAY_OF_WEEK

- 8.8.29: TO_DAY_OF_YEAR

- 8.8.30: TO_HOUR

- 8.8.31: TO_MINUTE

- 8.8.32: TO_MONDAY

- 8.8.33: TO_MONTH

- 8.8.34: TO_QUARTER

- 8.8.35: TO_SECOND

- 8.8.36: TO_START_OF_DAY

- 8.8.37: TO_START_OF_FIFTEEN_MINUTES

- 8.8.38: TO_START_OF_FIVE_MINUTES

- 8.8.39: TO_START_OF_HOUR

- 8.8.40: TO_START_OF_ISO_YEAR

- 8.8.41: TO_START_OF_MINUTE

- 8.8.42: TO_START_OF_MONTH

- 8.8.43: TO_START_OF_QUARTER

- 8.8.44: TO_START_OF_SECOND

- 8.8.45: TO_START_OF_TEN_MINUTES

- 8.8.46: TO_START_OF_WEEK

- 8.8.47: TO_START_OF_YEAR

- 8.8.48: TO_TIMESTAMP

- 8.8.49: TO_UNIX_TIMESTAMP

- 8.8.50: TO_WEEK_OF_YEAR

- 8.8.51: TO_YEAR

- 8.8.52: TO_YYYYMM

- 8.8.53: TO_YYYYMMDD

- 8.8.54: TO_YYYYMMDDHH

- 8.8.55: TO_YYYYMMDDHHMMSS

- 8.8.56: TODAY

- 8.8.57: TOMORROW

- 8.8.58: TRY_TO_DATETIME

- 8.8.59: TRY_TO_TIMESTAMP

- 8.8.60: WEEK

- 8.8.61: WEEKOFYEAR

- 8.8.62: YEAR

- 8.8.63: YESTERDAY

- 8.9: Dictionary Functions

- 8.9.1: DICT_GET

- 8.10: Geography Functions

- 8.10.1: GEO_TO_H3

- 8.10.2: GEOHASH_DECODE

- 8.10.3: GEOHASH_ENCODE

- 8.10.4: H3_CELL_AREA_M2

- 8.10.5: H3_CELL_AREA_RADS2

- 8.10.6: H3_DISTANCE

- 8.10.7: H3_EDGE_ANGLE

- 8.10.8: H3_EDGE_LENGTH_KM

- 8.10.9: H3_EDGE_LENGTH_M

- 8.10.10: H3_EXACT_EDGE_LENGTH_KM

- 8.10.11: H3_EXACT_EDGE_LENGTH_M

- 8.10.12: H3_EXACT_EDGE_LENGTH_RADS

- 8.10.13: H3_GET_BASE_CELL

- 8.10.14: H3_GET_DESTINATION_INDEX_FROM_UNIDIRECTIONAL_EDGE

- 8.10.15: H3_GET_FACES

- 8.10.16: H3_GET_INDEXES_FROM_UNIDIRECTIONAL_EDGE

- 8.10.17: H3_GET_ORIGIN_INDEX_FROM_UNIDIRECTIONAL_EDGE

- 8.10.18: H3_GET_RESOLUTION

- 8.10.19: H3_GET_UNIDIRECTIONAL_EDGE

- 8.10.20: H3_GET_UNIDIRECTIONAL_EDGE_BOUNDARY

- 8.10.21: H3_GET_UNIDIRECTIONAL_EDGES_FROM_HEXAGON

- 8.10.22: H3_HEX_AREA_KM2

- 8.10.23: H3_HEX_AREA_M2

- 8.10.24: H3_HEX_RING

- 8.10.25: H3_INDEXES_ARE_NEIGHBORS

- 8.10.26: H3_IS_PENTAGON

- 8.10.27: H3_IS_RES_CLASS_III

- 8.10.28: H3_IS_VALID

- 8.10.29: H3_K_RING

- 8.10.30: H3_LINE

- 8.10.31: H3_NUM_HEXAGONS

- 8.10.32: H3_TO_CENTER_CHILD

- 8.10.33: H3_TO_CHILDREN

- 8.10.34: H3_TO_GEO

- 8.10.35: H3_TO_GEO_BOUNDARY

- 8.10.36: H3_TO_PARENT

- 8.10.37: H3_TO_STRING

- 8.10.38: H3_UNIDIRECTIONAL_EDGE_IS_VALID

- 8.10.39: POINT_IN_POLYGON

- 8.10.40: STRING_TO_H3

- 8.11: Geometry Functions

- 8.11.1: HAVERSINE

- 8.11.2: ST_ASBINARY

- 8.11.3: ST_ASEWKB

- 8.11.4: ST_ASEWKT

- 8.11.5: ST_ASGEOJSON

- 8.11.6: ST_ASTEXT

- 8.11.7: ST_ASWKB

- 8.11.8: ST_ASWKT

- 8.11.9: ST_CONTAINS

- 8.11.10: ST_DIMENSION

- 8.11.11: ST_DISTANCE

- 8.11.12: ST_ENDPOINT

- 8.11.13: ST_GEOHASH

- 8.11.14: ST_GEOM_POINT

- 8.11.15: ST_GEOMETRYFROMEWKB

- 8.11.16: ST_GEOMETRYFROMEWKT

- 8.11.17: ST_GEOMETRYFROMTEXT

- 8.11.18: ST_GEOMETRYFROMWKB

- 8.11.19: ST_GEOMETRYFROMWKT

- 8.11.20: ST_GEOMFROMEWKB

- 8.11.21: ST_GEOMFROMEWKT

- 8.11.22: ST_GEOMFROMGEOHASH

- 8.11.23: ST_GEOMFROMTEXT

- 8.11.24: ST_GEOMFROMWKB

- 8.11.25: ST_GEOMFROMWKT

- 8.11.26: ST_GEOMPOINTFROMGEOHASH

- 8.11.27: ST_LENGTH

- 8.11.28: ST_MAKE_LINE

- 8.11.29: ST_MAKEGEOMPOINT

- 8.11.30: ST_MAKELINE

- 8.11.31: ST_MAKEPOLYGON

- 8.11.32: ST_NPOINTS

- 8.11.33: ST_NUMPOINTS

- 8.11.34: ST_POINTN

- 8.11.35: ST_POLYGON

- 8.11.36: ST_SETSRID

- 8.11.37: ST_SRID

- 8.11.38: ST_STARTPOINT

- 8.11.39: ST_TRANSFORM

- 8.11.40: ST_X

- 8.11.41: ST_XMAX

- 8.11.42: ST_XMIN

- 8.11.43: ST_Y

- 8.11.44: ST_YMAX

- 8.11.45: ST_YMIN

- 8.11.46: TO_GEOMETRY

- 8.11.47: TO_STRING

- 8.12: Hash Functions

- 8.12.1: BLAKE3

- 8.12.2: CITY64WITHSEED

- 8.12.3: MD5

- 8.12.4: SHA

- 8.12.5: SHA1

- 8.12.6: SHA2

- 8.12.7: SIPHASH

- 8.12.8: SIPHASH64

- 8.12.9: XXHASH32

- 8.12.10: XXHASH64

- 8.13: Interval Functions

- 8.13.1: EPOCH

- 8.13.2: TO_CENTURIES

- 8.13.3: TO_DAYS

- 8.13.4: TO_DECADES

- 8.13.5: TO_HOURS

- 8.13.6: TO_MICROSECONDS

- 8.13.7: TO_MILLENNIA

- 8.13.8: TO_MILLISECONDS

- 8.13.9: TO_MINUTES

- 8.13.10: TO_MONTHS

- 8.13.11: TO_SECONDS

- 8.13.12: TO_WEEKS

- 8.13.13: TO_YEARS

- 8.14: IP Address Functions

- 8.14.1: INET_ATON

- 8.14.2: INET_NTOA

- 8.14.3: IPV4_NUM_TO_STRING

- 8.14.4: IPV4_STRING_TO_NUM

- 8.14.5: TRY_INET_ATON

- 8.14.6: TRY_INET_NTOA

- 8.14.7: TRY_IPV4_NUM_TO_STRING

- 8.14.8: TRY_IPV4_STRING_TO_NUM

- 8.15: Map Functions

- 8.15.1: MAP_CAT

- 8.15.2: MAP_CONTAINS_KEY

- 8.15.3: MAP_DELETE

- 8.15.4: MAP_FILTER

- 8.15.5: MAP_INSERT

- 8.15.6: MAP_KEYS

- 8.15.7: MAP_PICK

- 8.15.8: MAP_SIZE

- 8.15.9: MAP_TRANSFORM_KEYS

- 8.15.10: MAP_TRANSFORM_VALUES

- 8.15.11: MAP_VALUES

- 8.16: Numeric Functions

- 8.16.1: ABS

- 8.16.2: ACOS

- 8.16.3: ADD

- 8.16.4: ASIN

- 8.16.5: ATAN

- 8.16.6: ATAN2

- 8.16.7: CBRT

- 8.16.8: CEIL

- 8.16.9: CEILING

- 8.16.10: COS

- 8.16.11: COT

- 8.16.12: CRC32

- 8.16.13: DEGREES

- 8.16.14: DIV

- 8.16.15: DIV0

- 8.16.16: DIVNULL

- 8.16.17: EXP

- 8.16.18: FACTORIAL

- 8.16.19: FLOOR

- 8.16.20: INTDIV

- 8.16.21: LN

- 8.16.22: LOG(b, x)

- 8.16.23: LOG(x)

- 8.16.24: LOG10

- 8.16.25: LOG2

- 8.16.26: MINUS

- 8.16.27: MOD

- 8.16.28: MODULO

- 8.16.29: NEG

- 8.16.30: NEGATE

- 8.16.31: PI

- 8.16.32: PLUS

- 8.16.33: POW

- 8.16.34: POWER

- 8.16.35: RADIANS

- 8.16.36: RAND()

- 8.16.37: RAND(n)

- 8.16.38: ROUND

- 8.16.39: SIGN

- 8.16.40: SIN

- 8.16.41: SQRT

- 8.16.42: SUBTRACT

- 8.16.43: TAN

- 8.16.44: TRUNCATE

- 8.17: Other Functions

- 8.17.1: ASSUME_NOT_NULL

- 8.17.2: EXISTS

- 8.17.3: GROUPING

- 8.17.4: HUMANIZE_NUMBER

- 8.17.5: HUMANIZE_SIZE

- 8.17.6: IGNORE

- 8.17.7: REMOVE_NULLABLE

- 8.17.8: TO_NULLABLE

- 8.17.9: TYPEOF

- 8.18: Search Functions

- 8.19: Semi-Structured Functions

- 8.19.1: AS_<type>

- 8.19.2: CHECK_JSON

- 8.19.3: FLATTEN

- 8.19.4: GET

- 8.19.5: GET_IGNORE_CASE

- 8.19.6: GET_PATH

- 8.19.7: IS_ARRAY

- 8.19.8: IS_BOOLEAN

- 8.19.9: IS_FLOAT

- 8.19.10: IS_INTEGER

- 8.19.11: IS_NULL_VALUE

- 8.19.12: IS_OBJECT

- 8.19.13: IS_STRING

- 8.19.14: JQ

- 8.19.15: JSON_ARRAY

- 8.19.16: JSON_ARRAY_APPLY

- 8.19.17: JSON_ARRAY_DISTINCT

- 8.19.18: JSON_ARRAY_ELEMENTS

- 8.19.19: JSON_ARRAY_EXCEPT

- 8.19.20: JSON_ARRAY_FILTER

- 8.19.21: JSON_ARRAY_INSERT

- 8.19.22: JSON_ARRAY_INTERSECTION

- 8.19.23: JSON_ARRAY_MAP

- 8.19.24: JSON_ARRAY_OVERLAP

- 8.19.25: JSON_ARRAY_REDUCE

- 8.19.26: JSON_ARRAY_TRANSFORM

- 8.19.27: JSON_EACH

- 8.19.28: JSON_EXTRACT_PATH_TEXT

- 8.19.29: JSON_MAP_FILTER

- 8.19.30: JSON_MAP_TRANSFORM_KEYS

- 8.19.31: JSON_MAP_TRANSFORM_VALUES

- 8.19.32: JSON_OBJECT_DELETE

- 8.19.33: JSON_OBJECT_INSERT

- 8.19.34: JSON_OBJECT_KEEP_NULL

- 8.19.35: JSON_OBJECT_KEYS

- 8.19.36: JSON_OBJECT_PICK

- 8.19.37: JSON_PATH_EXISTS

- 8.19.38: JSON_PATH_MATCH

- 8.19.39: JSON_PATH_QUERY

- 8.19.40: JSON_PATH_QUERY_ARRAY

- 8.19.41: JSON_PATH_QUERY_FIRST

- 8.19.42: JSON_PRETTY

- 8.19.43: JSON_STRIP_NULLS

- 8.19.44: JSON_TO_STRING

- 8.19.45: JSON_TYPEOF

- 8.19.46: OBJECT_KEYS

- 8.19.47: PARSE_JSON

- 8.20: Sequence Functions

- 8.20.1: NEXTVAL

- 8.21: String Functions

- 8.21.1: ASCII

- 8.21.2: BIN

- 8.21.3: BIT_LENGTH

- 8.21.4: CHAR

- 8.21.5: CHAR_LENGTH

- 8.21.6: CHARACTER_LENGTH

- 8.21.7: CONCAT

- 8.21.8: CONCAT_WS

- 8.21.9: FROM_BASE64

- 8.21.10: FROM_HEX

- 8.21.11: HEX

- 8.21.12: INSERT

- 8.21.13: INSTR

- 8.21.14: JARO_WINKLER

- 8.21.15: LCASE

- 8.21.16: LEFT

- 8.21.17: LENGTH

- 8.21.18: LENGTH_UTF8

- 8.21.19: LIKE

- 8.21.20: LOCATE

- 8.21.21: LOWER

- 8.21.22: LPAD

- 8.21.23: LTRIM

- 8.21.24: MID

- 8.21.25: NOT LIKE

- 8.21.26: NOT REGEXP

- 8.21.27: NOT RLIKE

- 8.21.28: OCT

- 8.21.29: OCTET_LENGTH

- 8.21.30: ORD

- 8.21.31: POSITION

- 8.21.32: QUOTE

- 8.21.33: REGEXP

- 8.21.34: REGEXP_INSTR

- 8.21.35: REGEXP_LIKE

- 8.21.36: REGEXP_REPLACE

- 8.21.37: REGEXP_SUBSTR

- 8.21.38: REPEAT

- 8.21.39: REPLACE

- 8.21.40: REVERSE

- 8.21.41: RIGHT

- 8.21.42: RLIKE

- 8.21.43: RPAD

- 8.21.44: RTRIM

- 8.21.45: SOUNDEX

- 8.21.46: SOUNDS LIKE

- 8.21.47: SPACE

- 8.21.48: SPLIT

- 8.21.49: SPLIT_PART

- 8.21.50: STRCMP

- 8.21.51: SUBSTR

- 8.21.52: SUBSTRING

- 8.21.53: TO_BASE64

- 8.21.54: TRANSLATE

- 8.21.55: TRIM

- 8.21.56: TRIM_BOTH

- 8.21.57: TRIM_LEADING

- 8.21.58: TRIM_TRAILING

- 8.21.59: UCASE

- 8.21.60: UNHEX

- 8.21.61: UPPER

- 8.22: System Functions

- 8.22.1: CLUSTERING_INFORMATION

- 8.22.2: FUSE_BLOCK

- 8.22.3: FUSE_COLUMN

- 8.22.4: FUSE_ENCODING

- 8.22.5: FUSE_SEGMENT

- 8.22.6: FUSE_SNAPSHOT

- 8.22.7: FUSE_STATISTIC

- 8.22.8: FUSE_TIME_TRAVEL_SIZE

- 8.23: Table Functions

- 8.23.1: GENERATE_SERIES

- 8.23.2: INFER_SCHEMA

- 8.23.3: INSPECT_PARQUET

- 8.23.4: LIST_STAGE

- 8.23.5: RESULT_SCAN

- 8.23.6: SHOW_GRANTS

- 8.23.7: STREAM_STATUS

- 8.23.8: TASK_HISTORY

- 8.24: Test Functions

- 8.24.1: SLEEP

- 8.25: UUID Functions

- 8.25.1: GEN_RANDOM_UUID

- 8.25.2: UUID

- 8.26: Window Functions

- 8.26.1: CUME_DIST

- 8.26.2: DENSE_RANK

- 8.26.3: FIRST

- 8.26.4: FIRST_VALUE

- 8.26.5: LAG

- 8.26.6: LAST

- 8.26.7: LAST_VALUE

- 8.26.8: LEAD

- 8.26.9: NTH_VALUE

- 8.26.10: NTILE

- 8.26.11: PERCENT_RANK

- 8.26.12: RANK

- 8.26.13: ROW_NUMBER

- 9: File Management

- 9.1: Adding New Document Accounts

- 9.1.1: Add AWS S3 Account

- 9.1.2: Add Google Cloud Storage Account

- 9.1.3: Add Wasabi Hot Storage Account

- 9.1.4: Add OneDrive Account

- 9.2: Account and Access Management

- 9.2.1: Control Document Account Access

- 9.2.2: Document Temporary Storage

- 9.2.3: Managing Document Account Backups

- 9.2.4: Managing Document Account Owners

- 9.2.5: Using Start Paths in Document Accounts

- 9.3: Using Document Accounts

- 10: How To

- 11: Identity and Access Management (IAM)

- 11.1: Overview

- 11.1.1: Organizations and Workspaces Explained

- 11.1.2: Viewing and Managing Workspaces

- 11.1.3: Managing Workspace Members

- 11.2: Managing Security Groups and Assignments

- 11.3: Member (User) Identity

- 11.4: Member Management

- 11.5: Member Authentication

- 11.6: Advanced Operations

- 11.6.1: Setting Up Auth0 SAML for Single Sign-On

- 11.6.2: Setting Up AWS IAM Identity Center SAML for Single Sign-On

- 11.6.3: Setting Up Google Workspace SAML for Single Sign-On

- 11.6.4: Setting Up Microsoft Entra ID SAML for Single Sign-On

- 11.6.5: Setting Up Okta SAML for Single Sign-On

- 11.6.6: Manage Organization Administrators

- 11.6.7: Managing Single Sign-On for Organization

- 11.6.8: Setting Member Expiration Period

- 12: Jupyter Notebooks and Command Line Interfaces

- 12.1: Jupyter Notebooks

- 12.2: Command Line

- 12.3: OAuth Tokens

- 13: Panel Apps

- 14: PlaidLink

- 14.1: PlaidLink Agents

- 14.2: Installation

- 14.3: Configure

- 14.4: Upgrade

- 15: PlaidXL

- 15.1: Installation

- 15.2: Connecting

- 15.3: Working with Data

- 16: Projects

- 16.1: Viewing Projects

- 16.2: Managing Projects

- 16.3: Managing Tables and Views

- 16.4: Managing Hierarchies

- 16.5: Managing Data Editors

- 16.6: Archive a Project

- 16.7: Viewing the Project Log

- 17: PySpark and Spark Compute Clusters

- 18: Scheduled Workflows

- 18.1: Event Scheduler

- 19: Workflow Steps

- 19.1: Workflow Control Steps

- 19.1.1: Create Workflow

- 19.1.2: Run Workflow

- 19.1.3: Stop Workflow

- 19.1.4: Copy Workflow

- 19.1.5: Rename Workflow

- 19.1.6: Delete Workflow

- 19.1.7: Set Project Variable

- 19.1.8: Set Workflow Variable

- 19.1.9: Workflow Loop

- 19.1.10: Raise Workflow Error

- 19.1.11: Clear Workflow Log

- 19.2: Import Steps

- 19.2.1: Import Archive

- 19.2.2: Import CSV

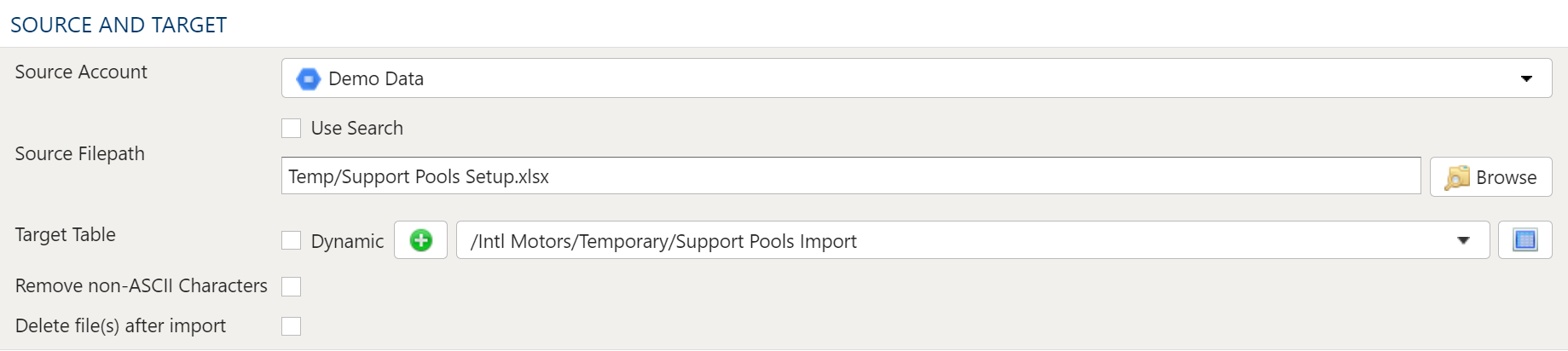

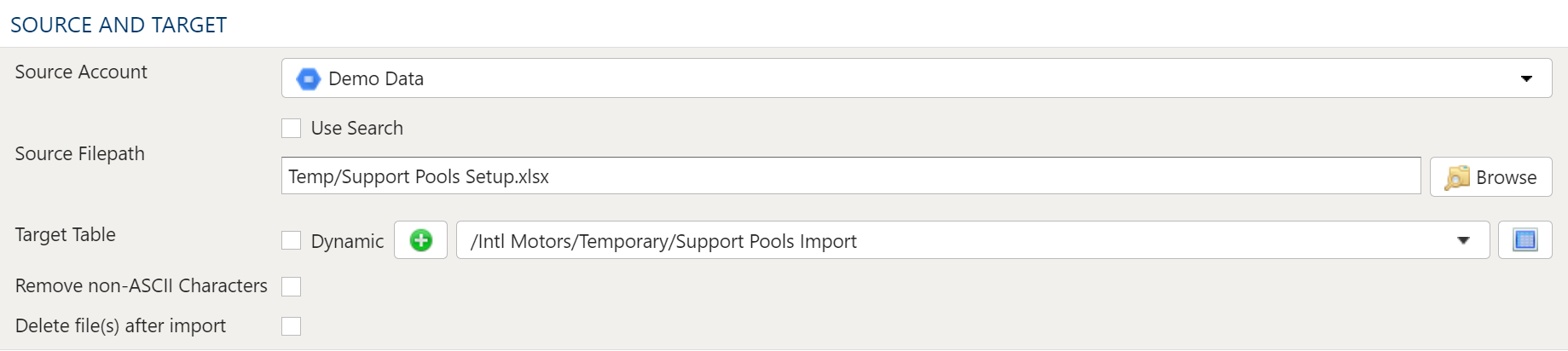

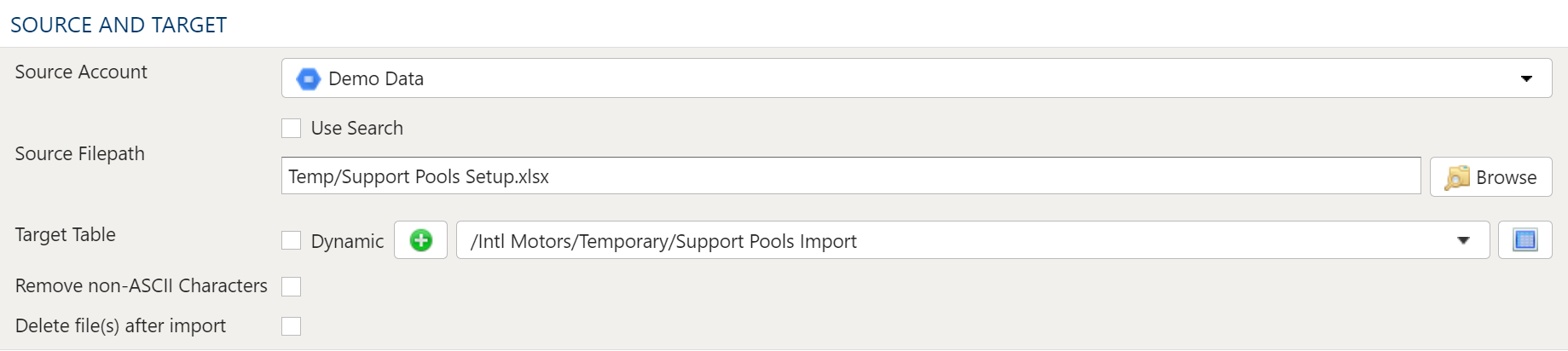

- 19.2.3: Import Excel

- 19.2.4: Import External Database Tables

- 19.2.5: Import Fixed Width

- 19.2.6: Import Google BigQuery

- 19.2.7: Import Google Spreadsheet

- 19.2.8: Import HDF

- 19.2.9: Import HTML

- 19.2.10: Import JSON

- 19.2.11: Import Project Table

- 19.2.12: Import Quandl

- 19.2.13: Import SAS7BDAT

- 19.2.14: Import SPSS

- 19.2.15: Import SQL

- 19.2.16: Import Stata

- 19.2.17: Import XML

- 19.2.18: Import Alteryx YXDB

- 19.2.19: Import Parquet

- 19.2.20: Import Avro

- 19.3: Export Steps

- 19.3.1: Export to CSV

- 19.3.2: Export to Excel

- 19.3.3: Export to External Project Table

- 19.3.4: Export to Google Spreadsheet

- 19.3.5: Export to HDF

- 19.3.6: Export to HTML

- 19.3.7: Export to JSON

- 19.3.8: Export to Quandl

- 19.3.9: Export to SQL

- 19.3.10: Export to Table Archive

- 19.3.11: Export to XML

- 19.4: Table Steps

- 19.4.1: Table Anti Join

- 19.4.2: Table Append

- 19.4.3: Table Clear

- 19.4.4: Table Copy

- 19.4.5: Table Cross Join

- 19.4.6: Table Drop

- 19.4.7: Table Extract

- 19.4.8: Table Faker

- 19.4.9: Table In-Place Delete

- 19.4.10: Table In-Place Update

- 19.4.11: Table Inner Join

- 19.4.12: Table Lookup

- 19.4.13: Table Melt

- 19.4.14: Table Outer Join

- 19.4.15: Table Pivot

- 19.4.16: Table Union All

- 19.4.17: Table Union Distinct

- 19.4.18: Table Upsert

- 19.5: Dimension Steps

- 19.5.1: Dimension Clear

- 19.5.2: Dimension Create

- 19.5.3: Dimension Delete

- 19.5.4: Dimension Export

- 19.5.5: Dimension Load

- 19.5.6: Dimension Sort

- 19.6: Document Steps

- 19.6.1: Compress PDF

- 19.6.2: Concatenate Files

- 19.6.3: Convert Document Encoding

- 19.6.4: Convert Document Encoding to ASCII

- 19.6.5: Convert Document Encoding to UTF-8

- 19.6.6: Convert Document Encoding to UTF-16

- 19.6.7: Convert Image to PDF

- 19.6.8: Convert PDF or Image to JPEG

- 19.6.9: Copy Document Directory

- 19.6.10: Copy Document File

- 19.6.11: Create Document Directory

- 19.6.12: Crop Image to Headshot

- 19.6.13: Delete Document Directory

- 19.6.14: Delete Document File

- 19.6.15: Document Text Substitution

- 19.6.16: Fix File Extension

- 19.6.17: Merge Multiple PDFs

- 19.6.18: Rename Document Directory

- 19.6.19: Rename Document File

- 19.7: Notification Steps

- 19.7.1: Notify Distribution Group

- 19.7.2: Notify Agent

- 19.7.3: Notify Via Email

- 19.7.4: Notify Via Log

- 19.7.5: Notify via Microsoft Teams

- 19.7.6: Notify via Slack

- 19.7.7: Notify Via SMS

- 19.7.8: Notify Via Twitter

- 19.7.9: Notify Via Web Hook

- 19.8: Agent Steps

- 19.8.1: Agent Remote Execution of SQL

- 19.8.2: Agent Remote Export of SQL Result

- 19.8.3: Agent Remote Import Table into SQL Database

- 19.8.4: Document - Remote Delete File

- 19.8.5: Document - Remote Export File

- 19.8.6: Document - Remote Import File

- 19.8.7: Document - Remote Rename File

- 19.9: General Steps

- 19.9.1: Pass

- 19.9.2: Run Remote Python

- 19.9.3: User Defined Transform

- 19.9.4: Wait

- 19.10: PDF Reporting Steps

- 19.10.1: Report Single

- 19.10.2: Reports Batch

- 19.11: Common Step Operations

- 19.11.1: Advanced Data Mapper Usage

- 19.12: Allocation By Assignment Dimension

- 19.13: Allocation Split

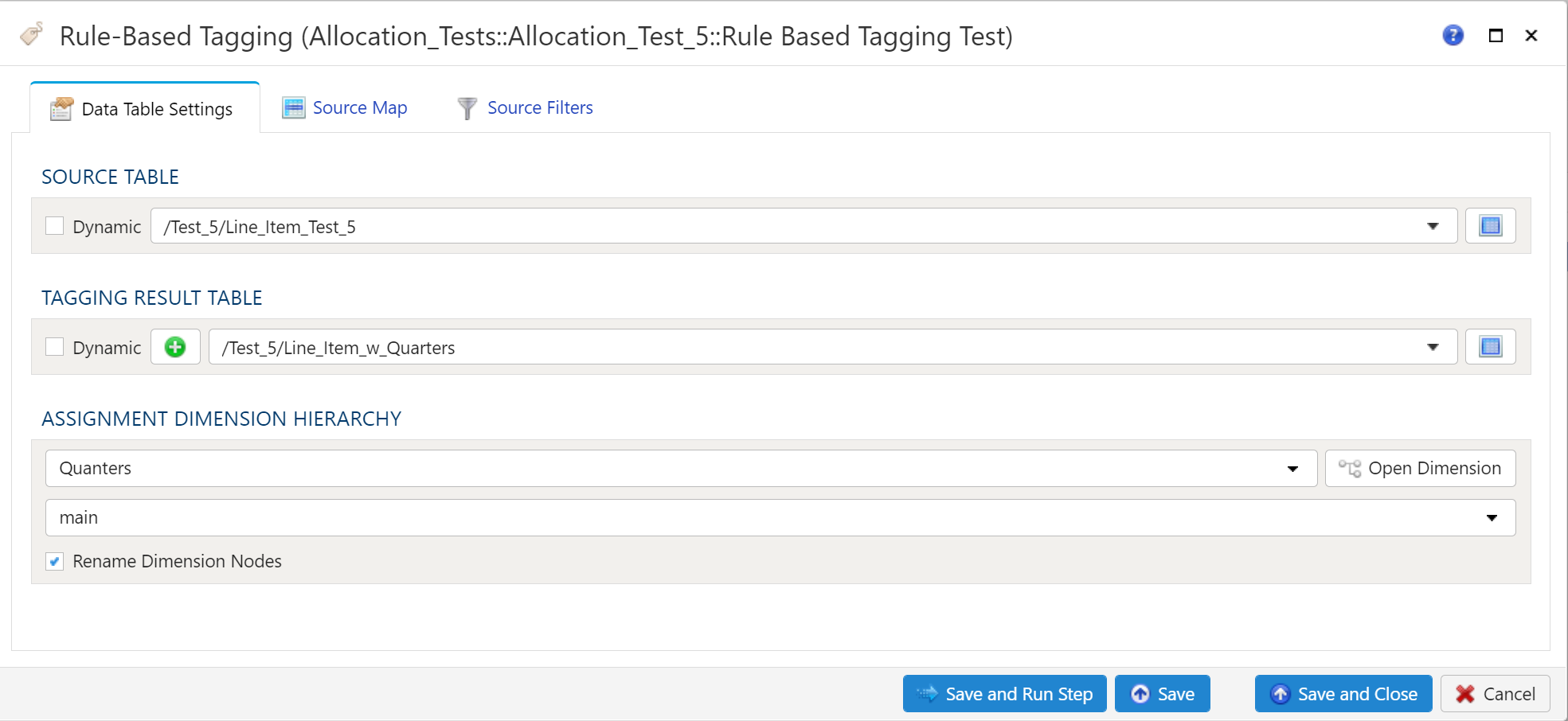

- 19.14: Rule-Based Tagging

- 19.15: SAP ECC and S/4HANA Steps

- 19.15.1: Call SAP Financial Document Attachment

- 19.15.2: Call SAP General Ledger Posting

- 19.15.3: Call SAP Master Data Table RFC

- 19.15.4: Call SAP RFC

- 19.16: SAP PCM Steps

- 19.16.1: Create SAP PCM Model

- 19.16.2: Delete SAP PCM Model

- 19.16.3: Calculate PCM Model

- 19.16.4: Copy SAP PCM Model

- 19.16.5: Copy SAP PCM Period

- 19.16.6: Copy SAP PCM Version

- 19.16.7: Rename SAP PCM Model

- 19.16.8: Run SAP PCM Console Job

- 19.16.9: Run SAP PCM Hyper Loader

- 19.16.10: Stop PCM Model Calculation

- 20: Workflows

- 20.1: Where are the Workflows

- 20.2: Workflow Explorer

- 20.3: Create Workflow

- 20.4: Duplicate a Workflow

- 20.5: Copy & Paste steps

- 20.6: Change the order of steps in a workflow

- 20.7: Run a workflow

- 20.8: Running one step in a workflow

- 20.9: Running a range of steps in a workflow

- 20.10: Managing Step Errors

- 20.11: Continue on Error

- 20.12: Skip steps in a workflow

- 20.13: Conditional Step Execution

- 20.14: Controlling Parallel Execution

- 20.15: Manage Workflow Variables

- 20.16: Viewing Workflow Log

- 20.17: View Workflow Report

- 20.18: View a dependency audit

1 - Allocation Assignments

1.1 - Getting Started

1.1.1 - Allocations Quick Start

Documentation coming soon...

1.1.2 - Rule Based Tagging

Documentation coming soon...

1.1.3 - Why are Allocations Useful

Documentation coming soon...

1.2 - Configure Allocations

1.2.1 - Configure an Allocation

Purpose

Allocations enable values (typically costs) to be shredded to a more granular level by applying a driver. Allocations are used for a multitude of purposes, including but not limited to Activity-Based Costing, IT & Shared Service Chargeback, and calculation of the fully loaded cost to produce and provide a good or service to customers. They are a fundamental tool for financial analysis and a cornerstone of managerial reporting operations such as Customer & Product Profitability. They are also a useful construct for establishing and managing global Intercompany Transfer Prices for goods and services.

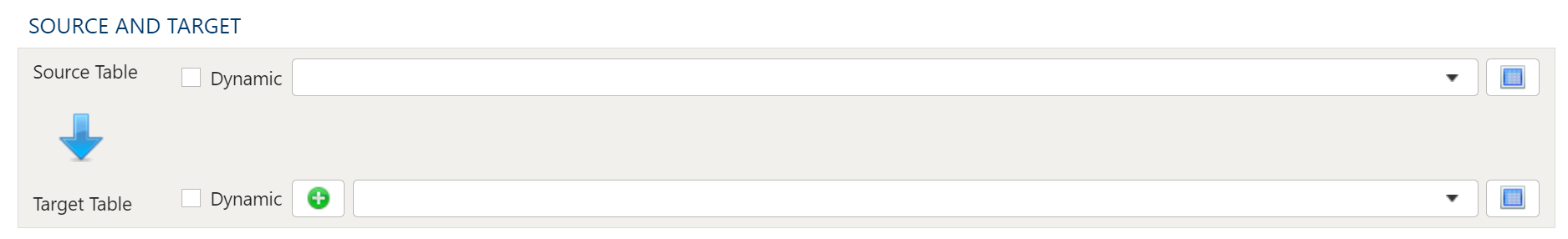

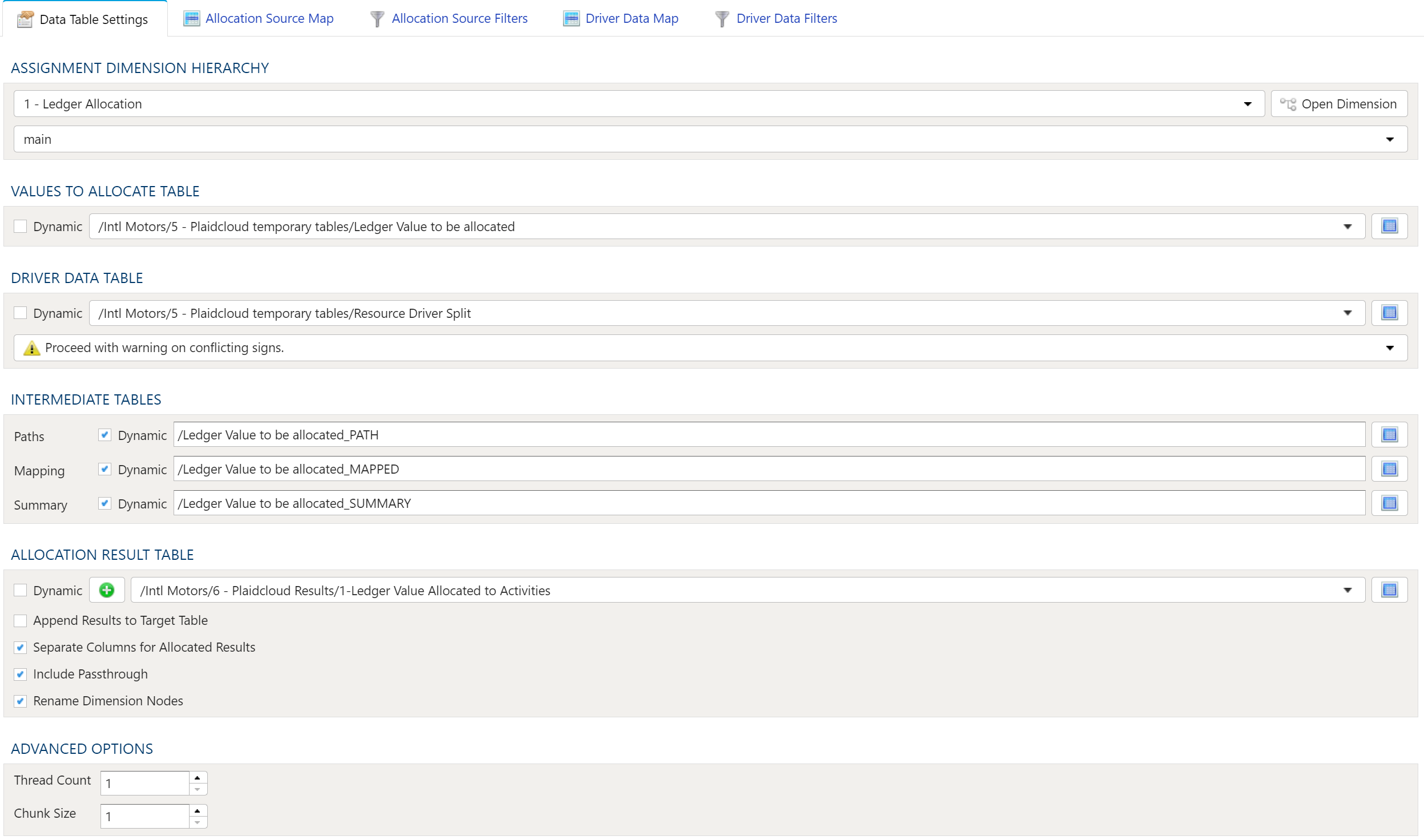

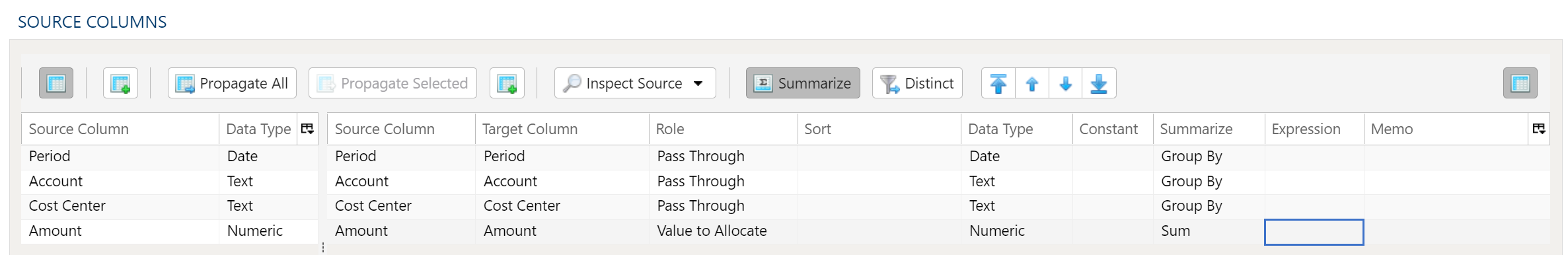

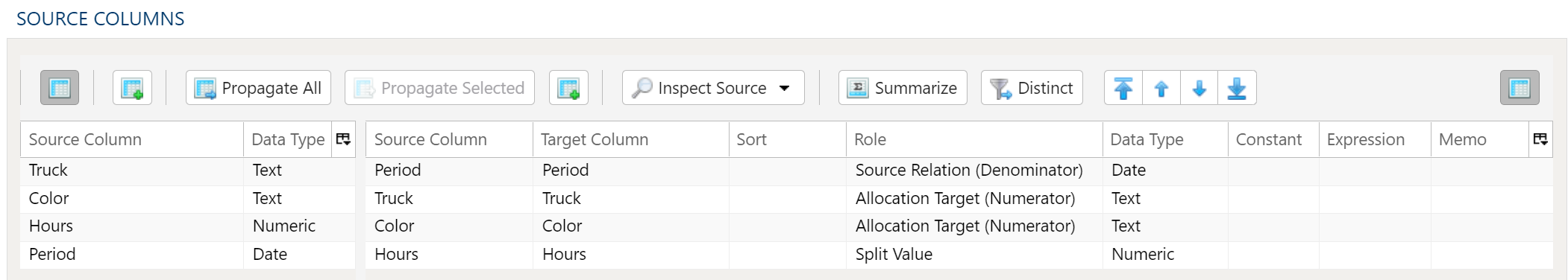

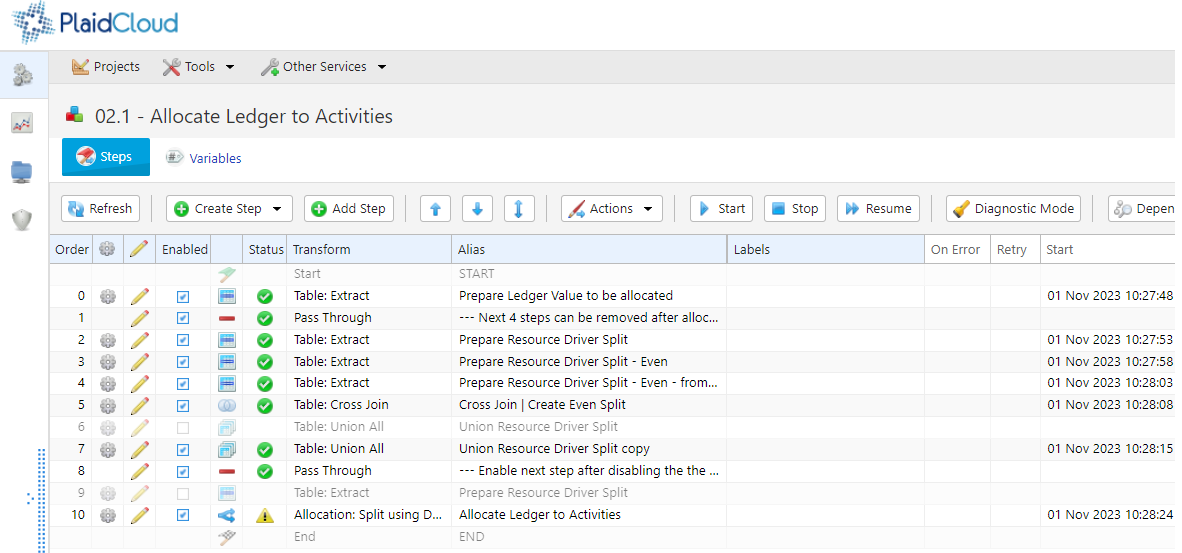

Setting up the Allocation transform

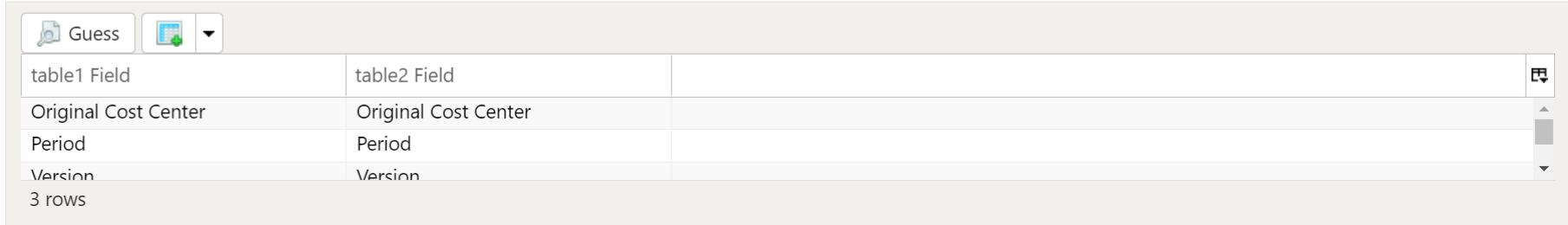

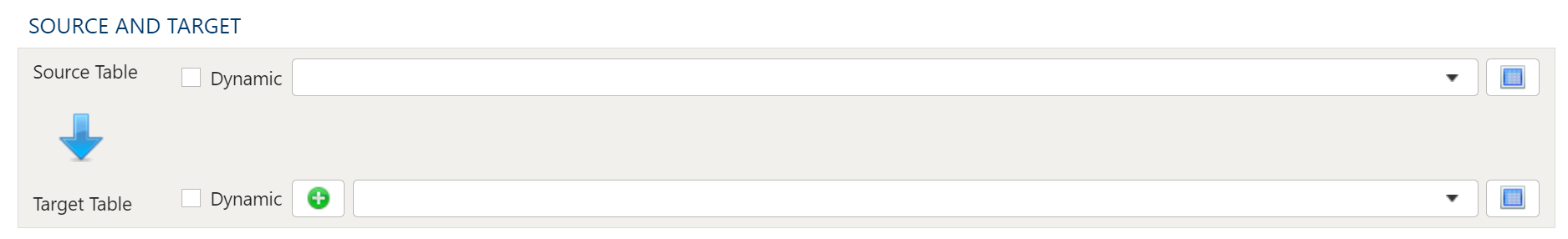

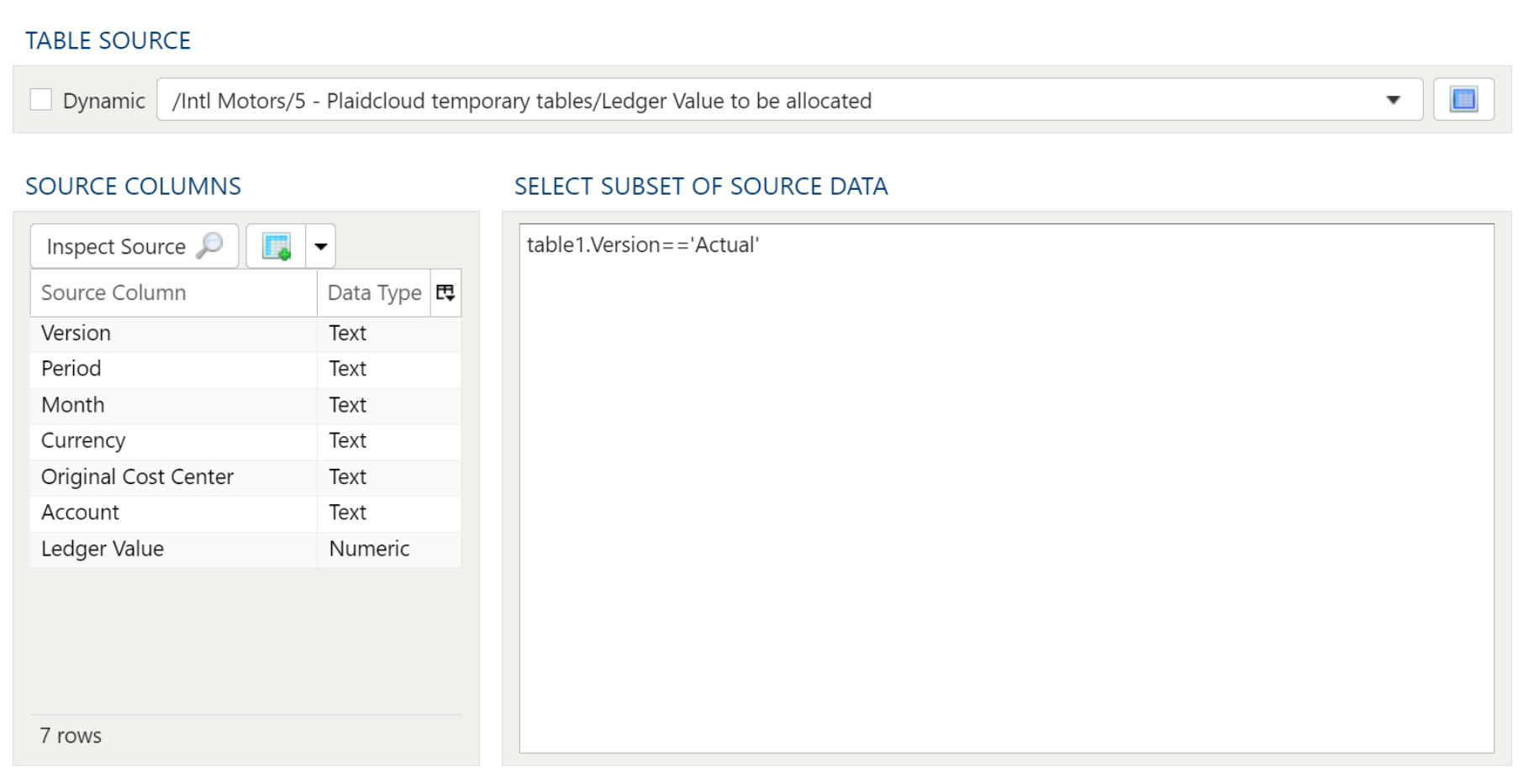

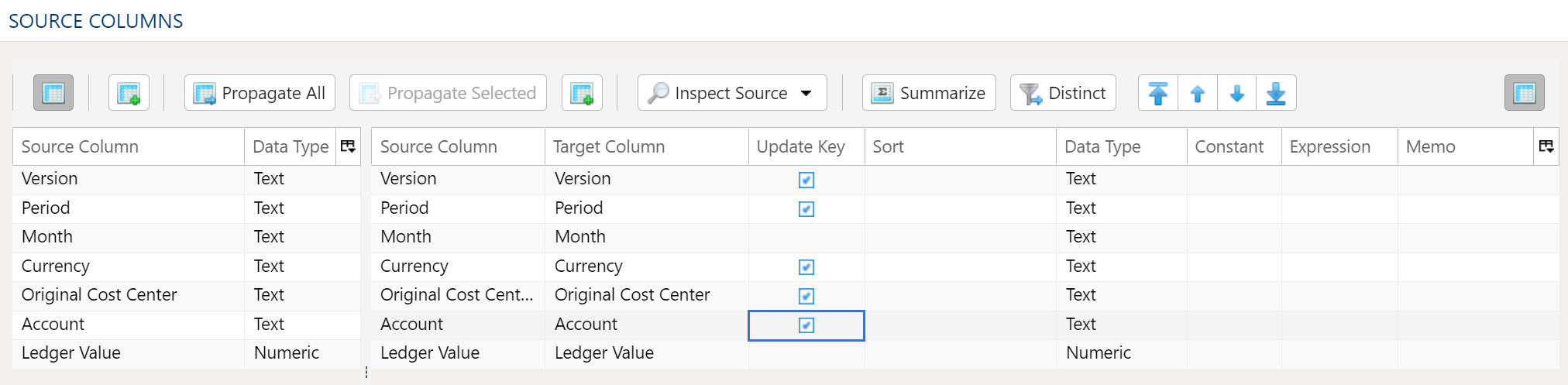

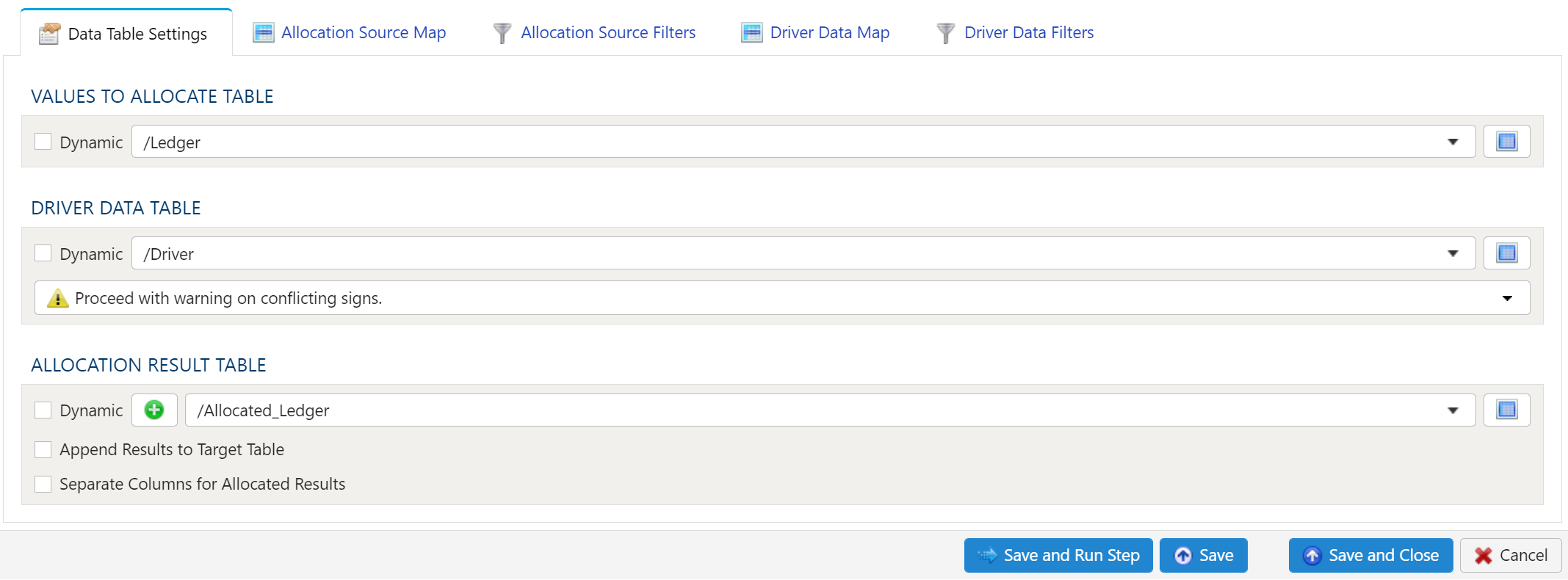

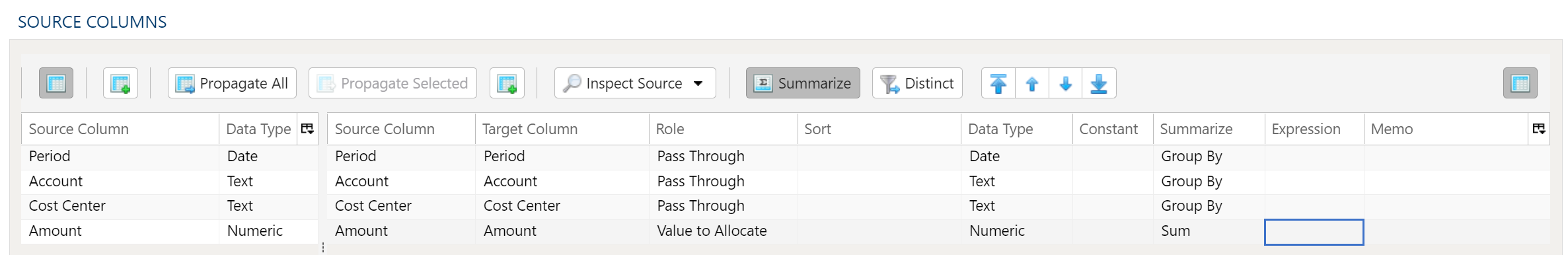

In practice, allocations are set up in PlaidCloud in much the same way as other data transforms such as joins and lookups. Four configuration parameters must be set for an Allocation transform to succeed.

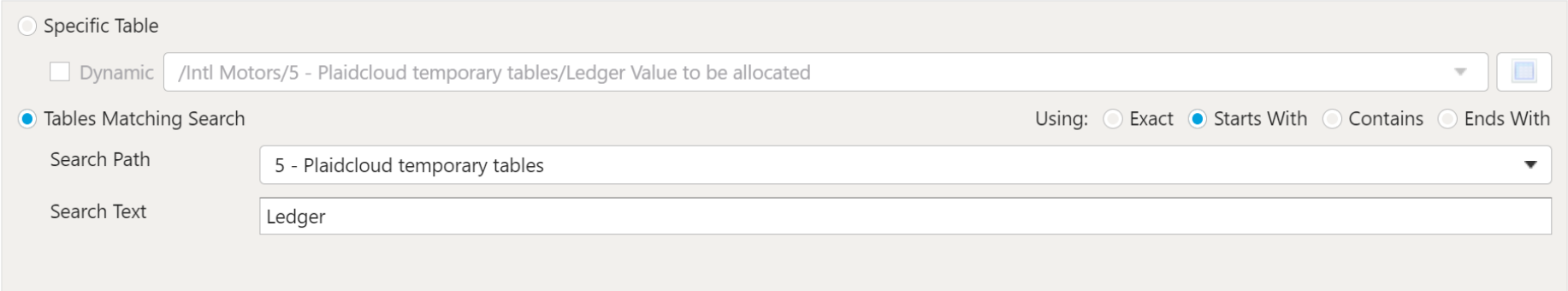

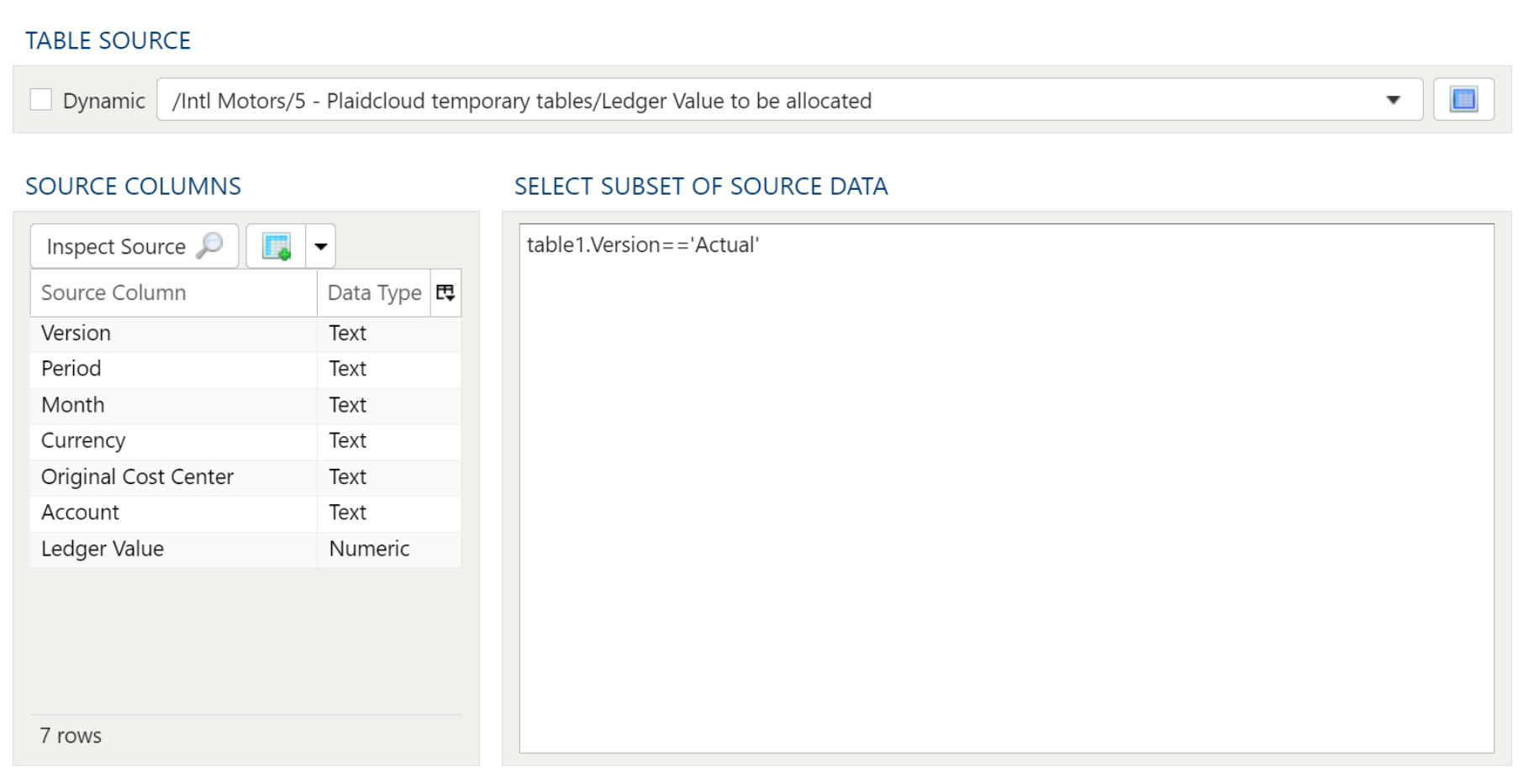

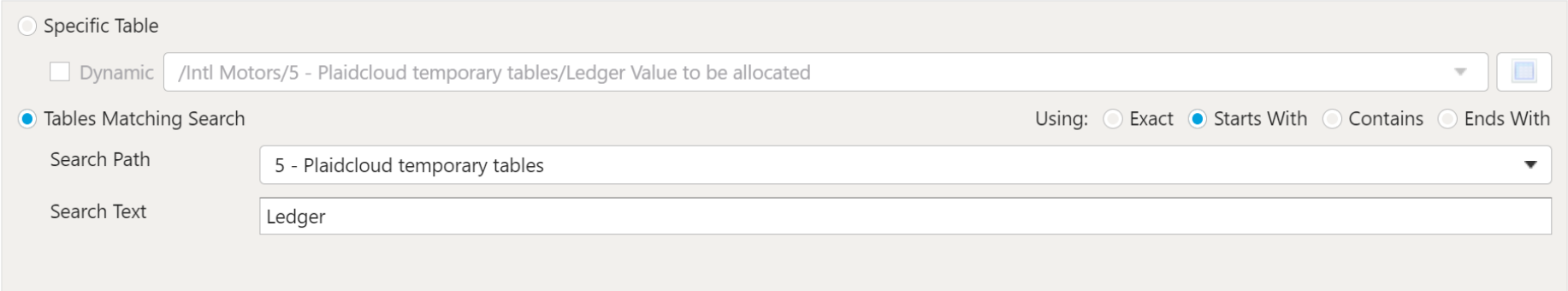

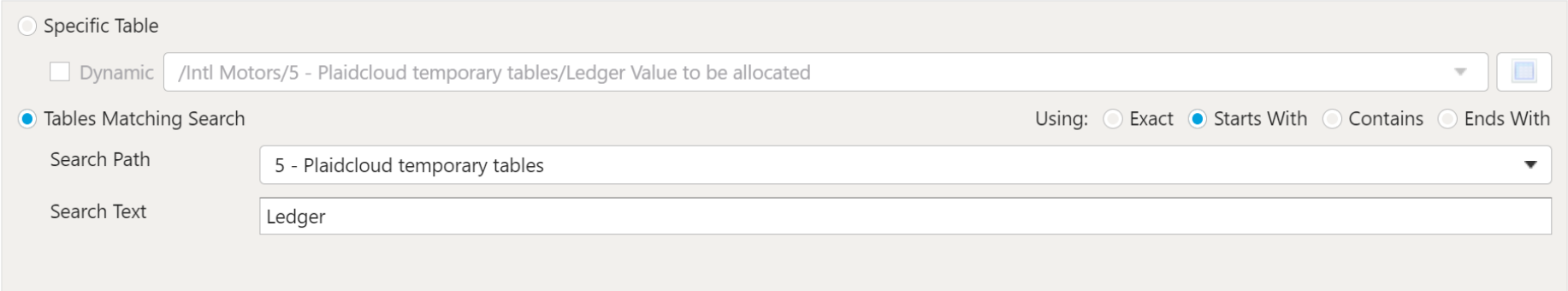

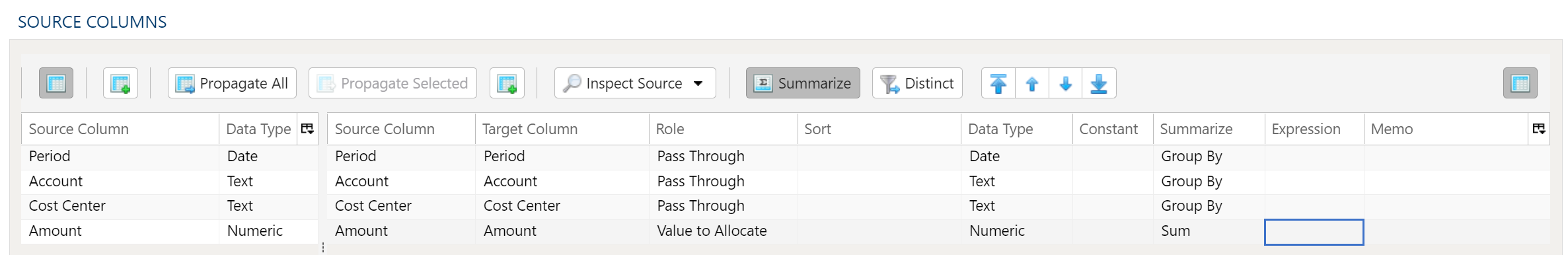

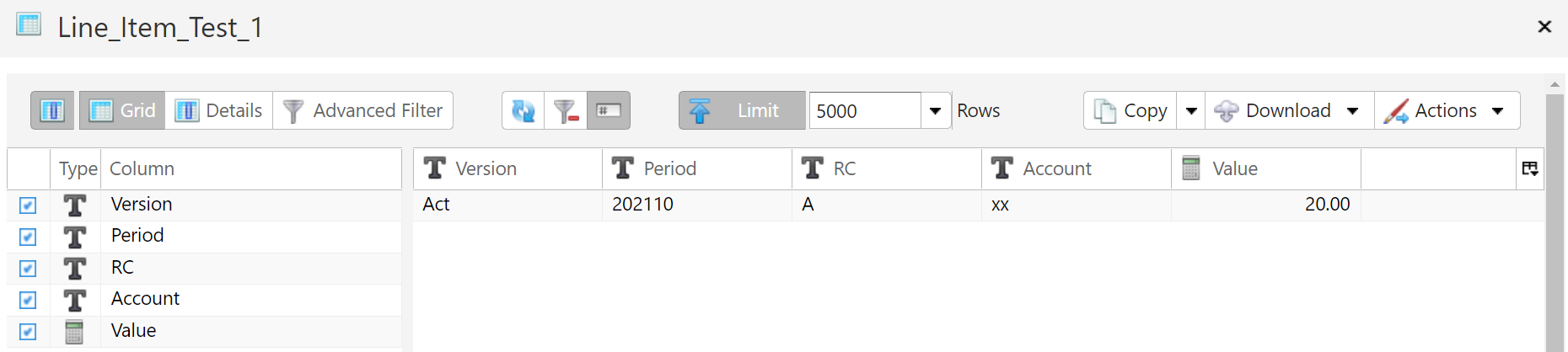

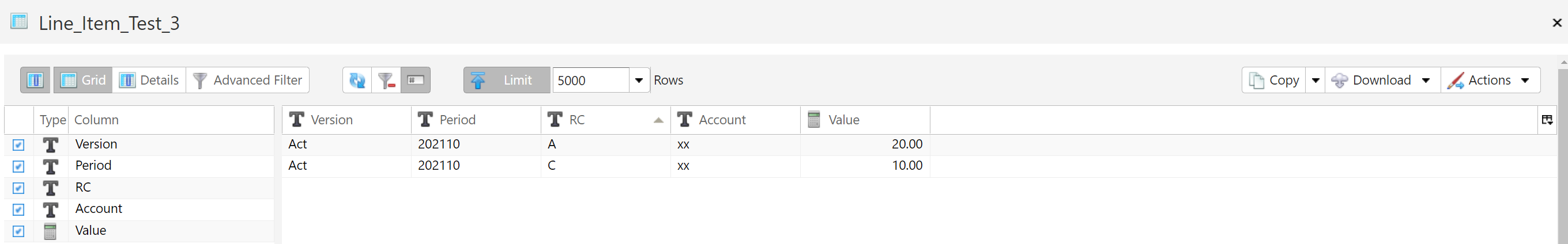

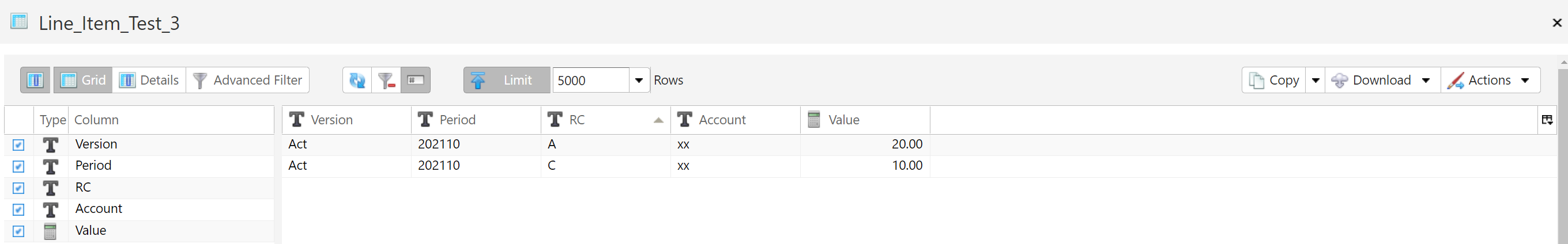

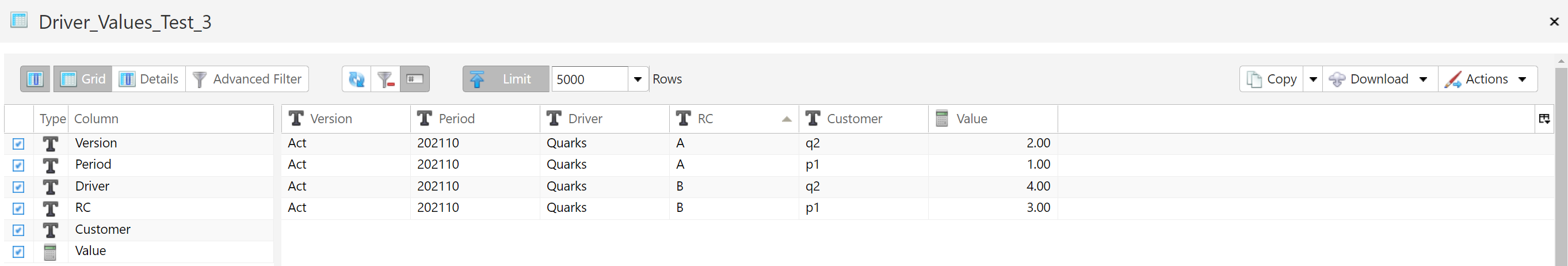

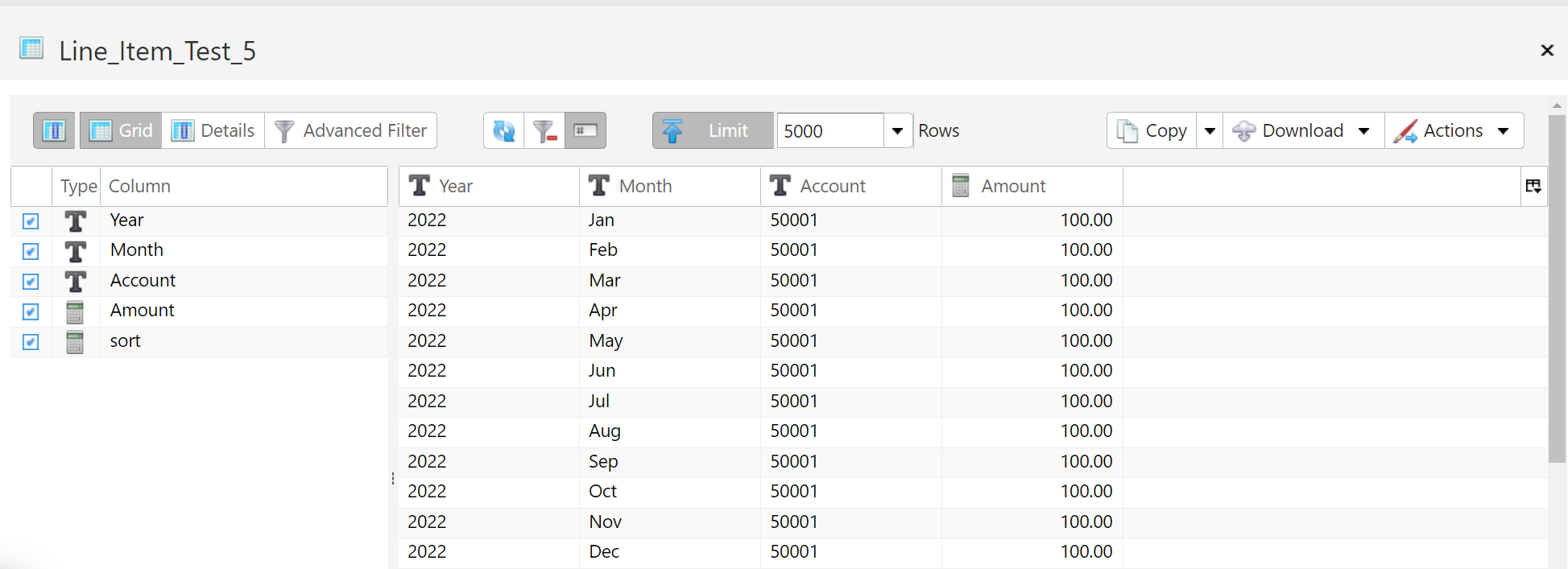

- Specify Preallocated Data: Specify the preallocated data table in the Values To Allocate Table section of the allocation transform.

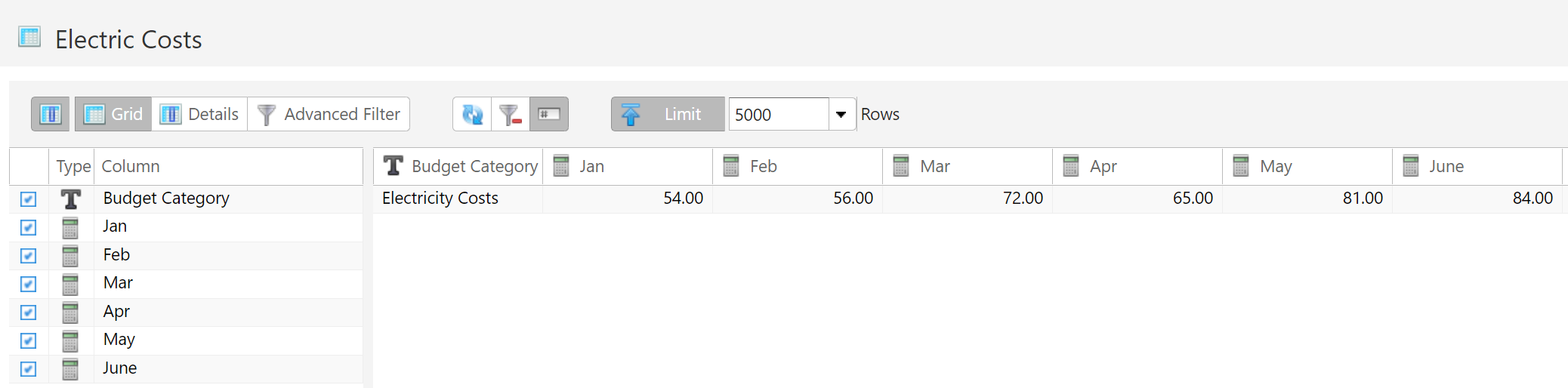

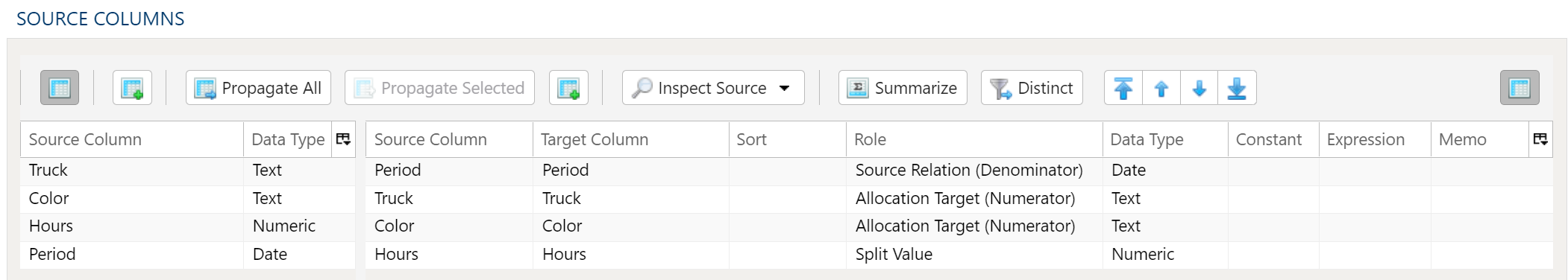

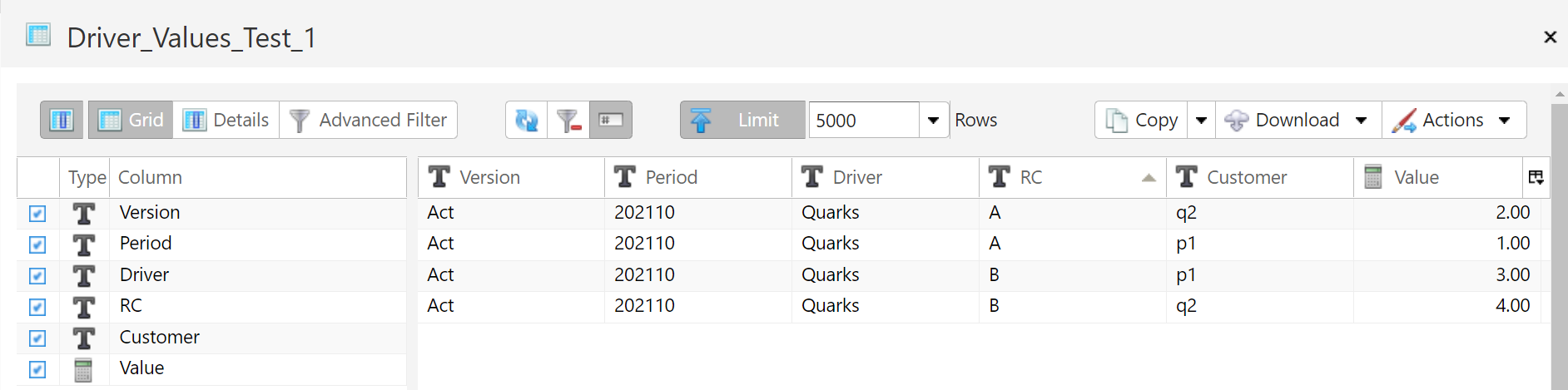

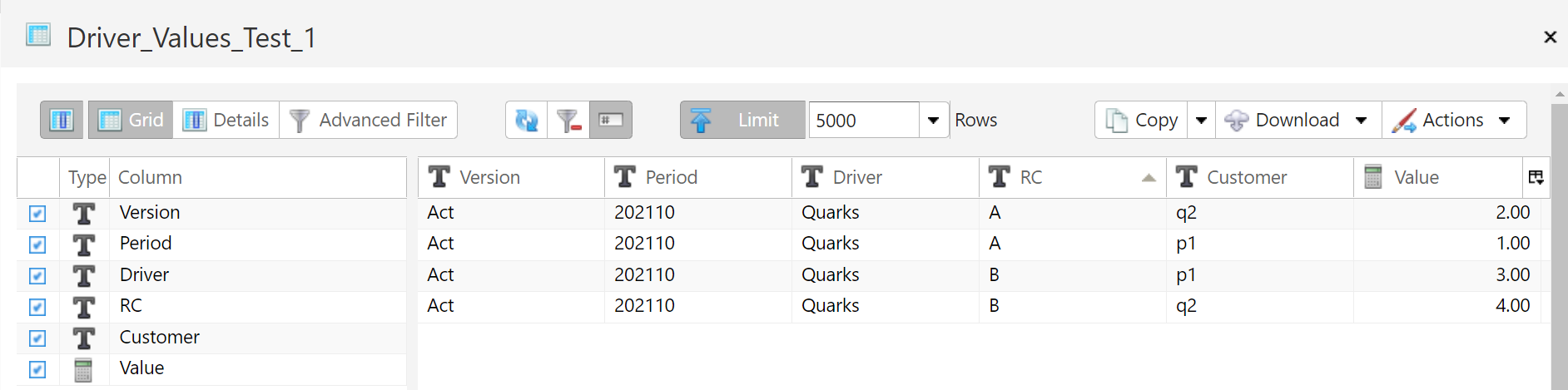

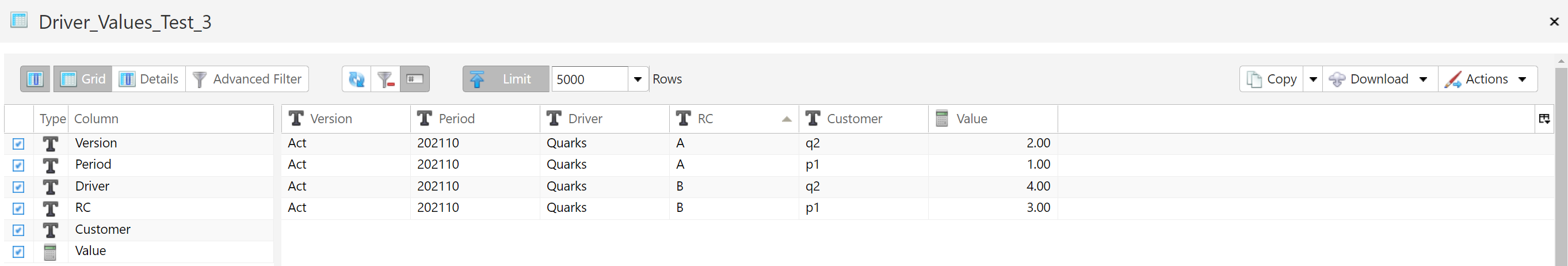

- Specify Driver Data: Driver data will serve as the basis for the ratios used in the allocation. Choose the driver data table in the Driver Data Table section of the allocation transform.

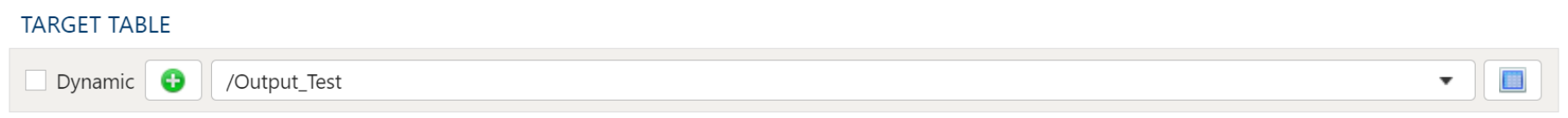

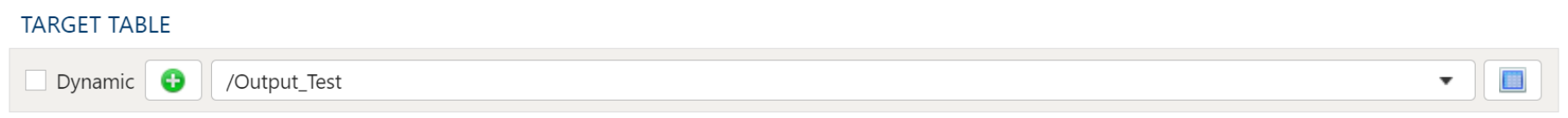

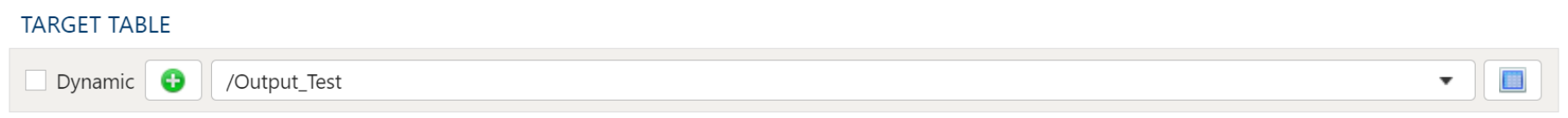

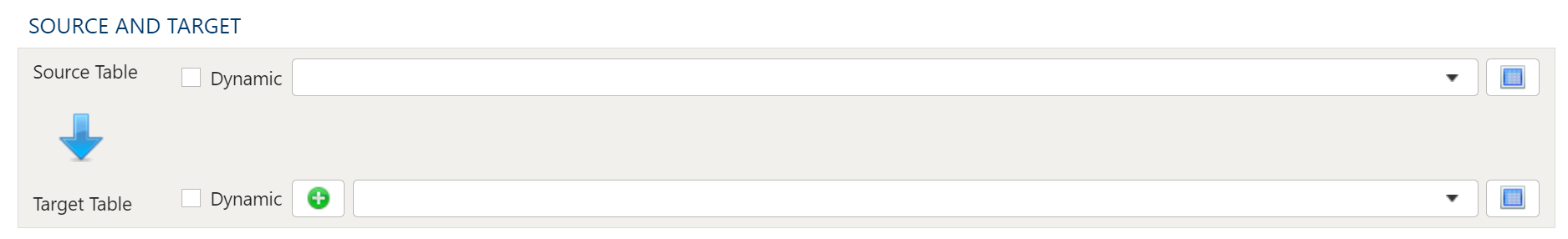

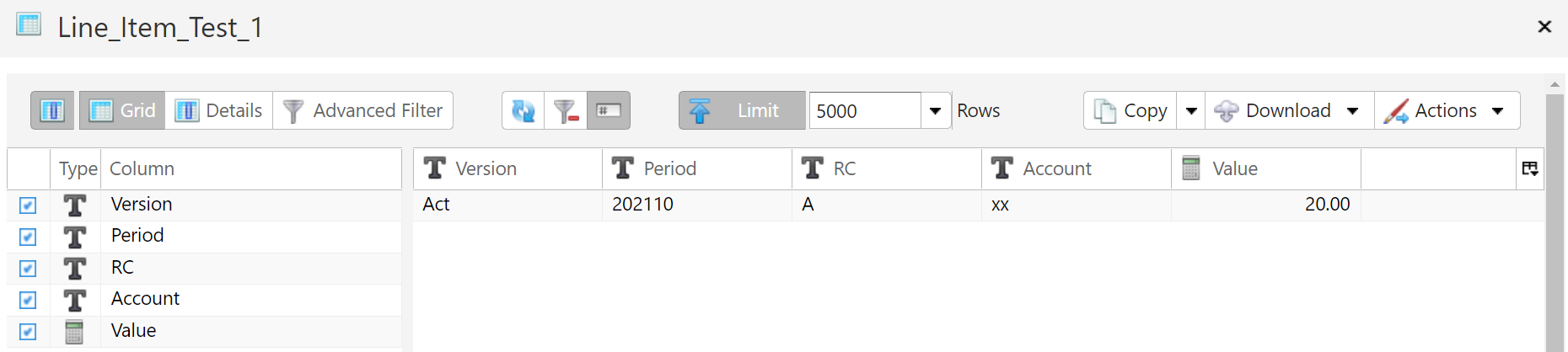

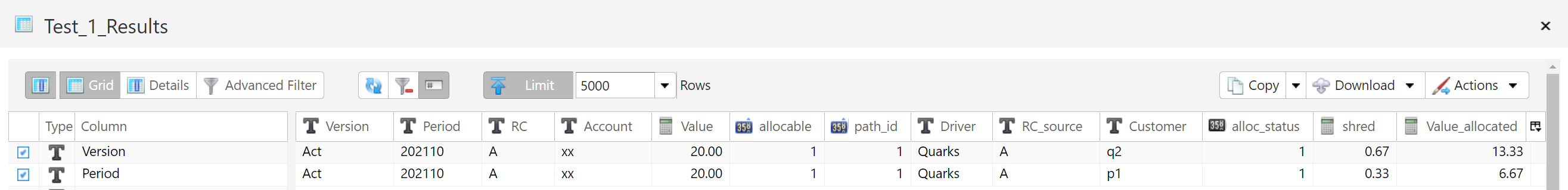

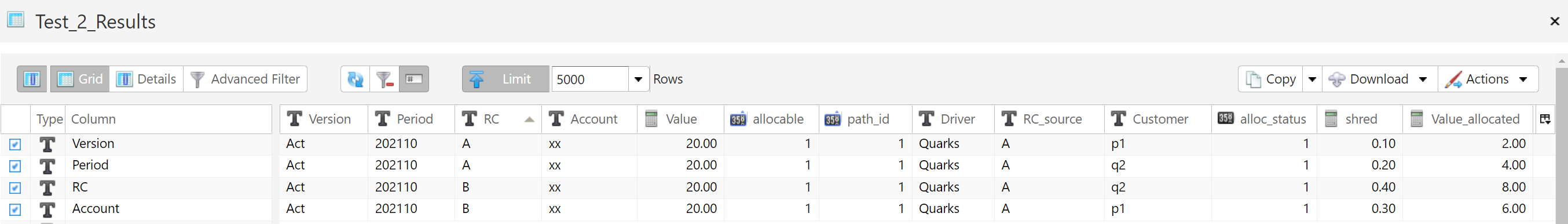

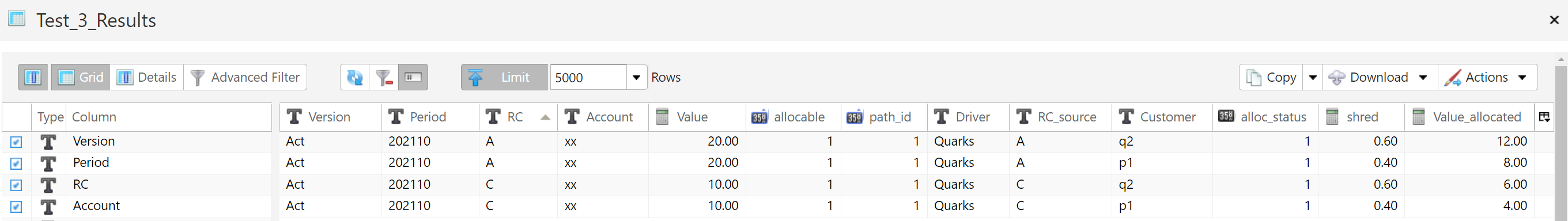

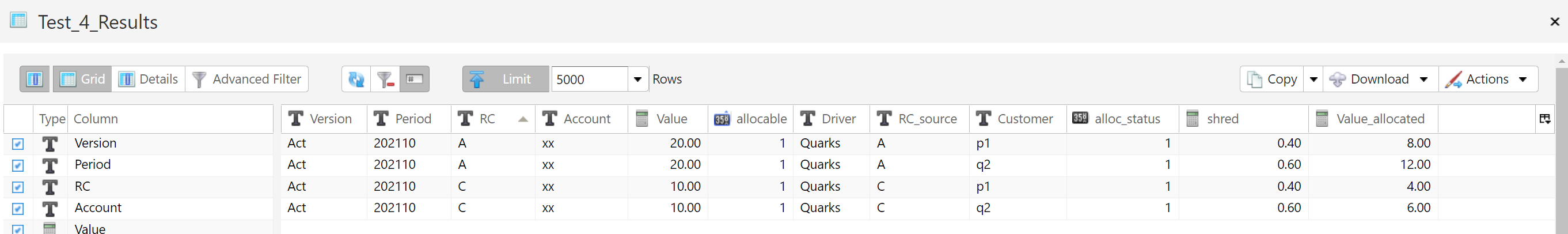

- Specify the Results Table: Post-allocated data must be stored in a table. Specify the table in the Allocation Result Table section of the allocation transform.

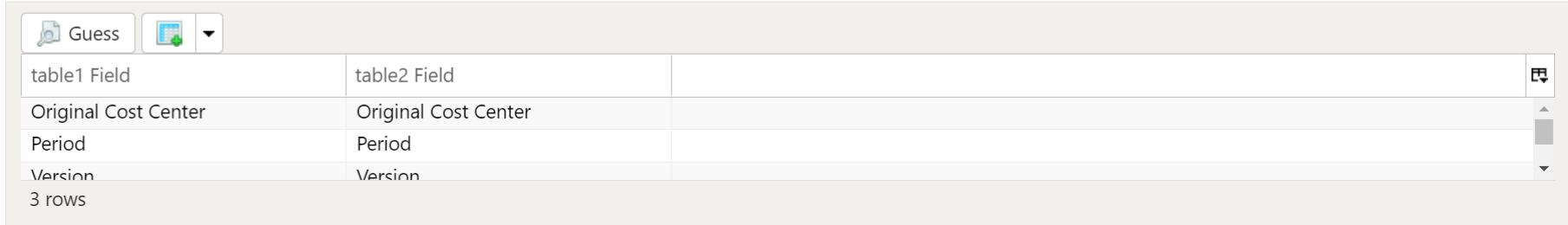

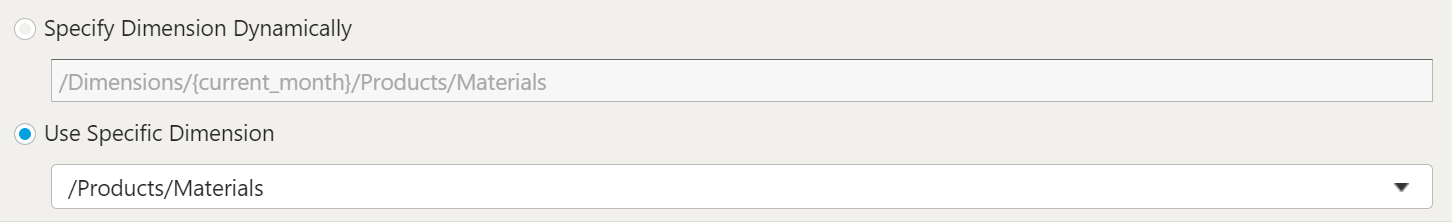

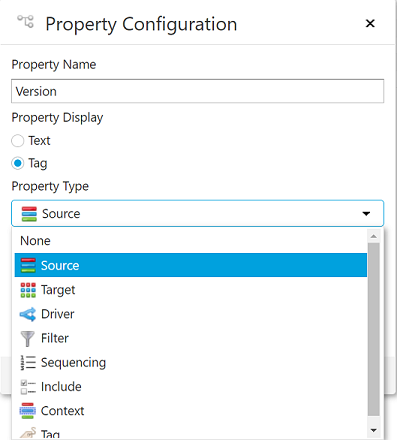

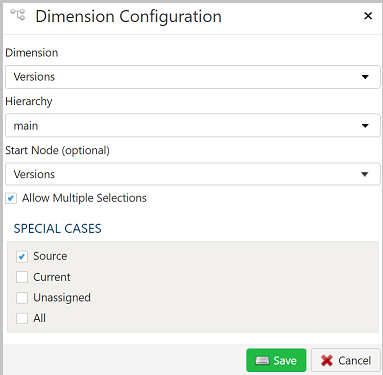

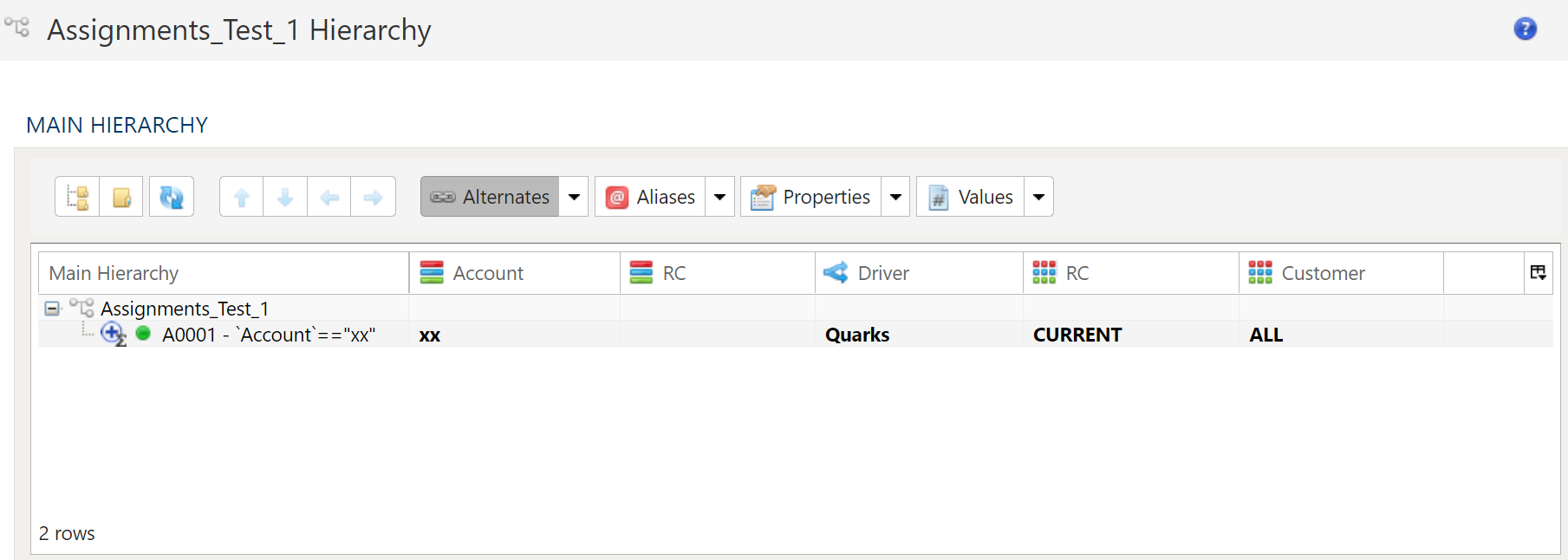

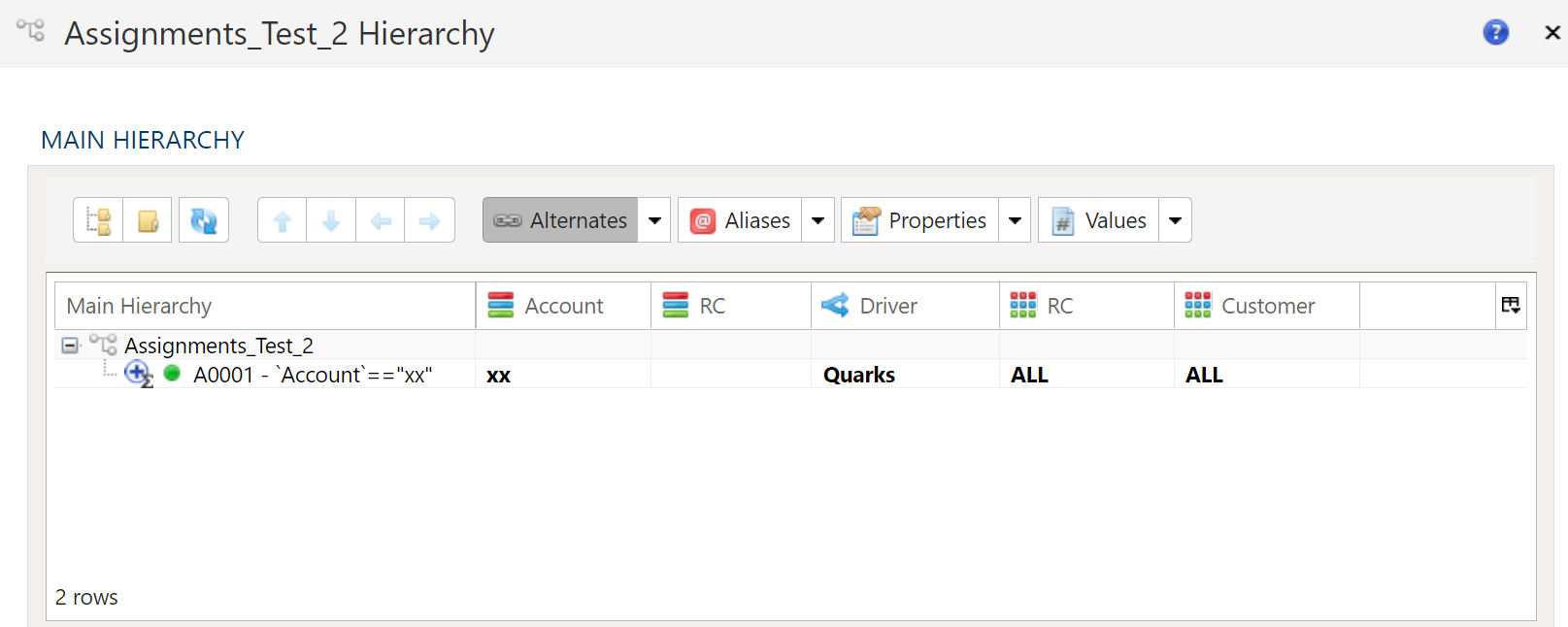

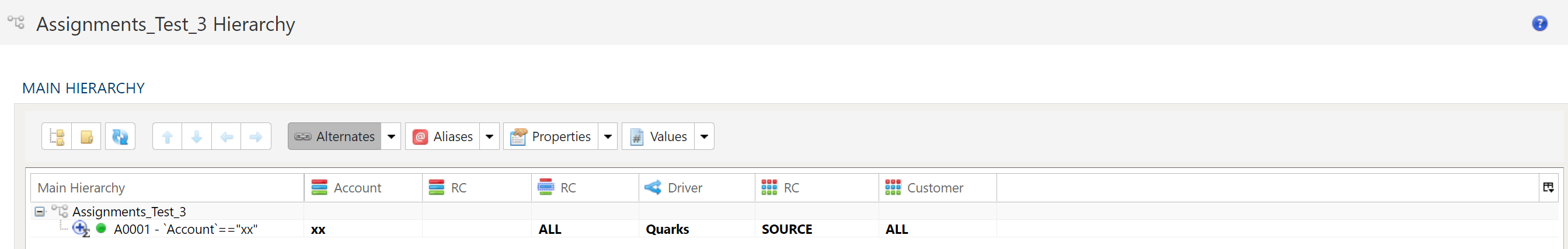

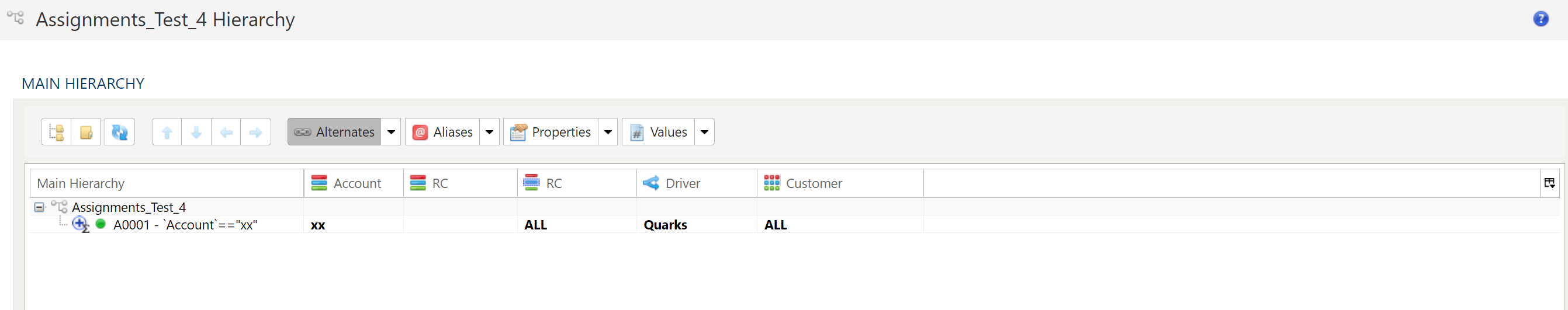

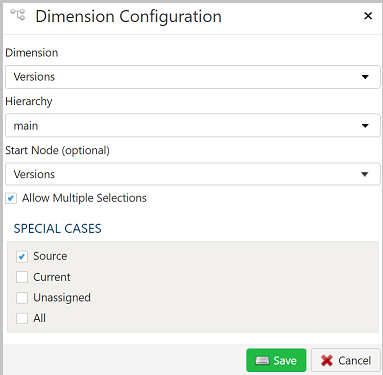

- Specify the Assignment Dimension: Allocations require an assignment dimension, whose purpose is to prescribe how each record or set of records in the preallocated data will be assigned. Specify the assignment dimension in the Assignment Dimension Hierarchy section of the allocation transform.

Key Concepts

The sum of values in an allocated dataset should tie out to the sum of values in the preallocated source data.
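The driver-based split and the tie-out rule can be illustrated with a small, self-contained Python sketch. This is not PlaidCloud's implementation. The table contents, the field names (`cost_center`, `amount`, `source`, `target`), and the helper `allocate` are all hypothetical, and the assignment dimension is reduced to a plain mapping from each source cost center to the targets it is assigned to.

```python
# Hypothetical preallocated data ("Values To Allocate"): cost by cost center.
values_to_allocate = [
    {"cost_center": "IT", "amount": 1000.0},
    {"cost_center": "HR", "amount": 600.0},
]

# Hypothetical driver data: headcount per target department.
driver_data = {"Sales": 30.0, "Ops": 50.0, "Finance": 20.0}

# Hypothetical stand-in for the assignment dimension: which targets
# receive each source cost center's cost.
assignments = {
    "IT": ["Sales", "Ops", "Finance"],
    "HR": ["Sales", "Ops"],
}


def allocate(values, drivers, assignment_map):
    """Split each source amount across its assigned targets by driver ratio."""
    results = []
    for row in values:
        targets = assignment_map[row["cost_center"]]
        driver_total = sum(drivers[t] for t in targets)
        if driver_total == 0:
            raise ValueError(f"No driver quantity for {row['cost_center']}")
        for target in targets:
            ratio = drivers[target] / driver_total
            results.append(
                {
                    "source": row["cost_center"],
                    "target": target,
                    "amount": row["amount"] * ratio,
                }
            )
    return results


allocation_result = allocate(values_to_allocate, driver_data, assignments)

# Tie-out check: the allocated total should match the preallocated total
# (compared with a small tolerance because the amounts are floats).
source_total = sum(r["amount"] for r in values_to_allocate)
allocated_total = sum(r["amount"] for r in allocation_result)
assert abs(source_total - allocated_total) < 1e-9
```

In this sketch the ratios for each source always sum to one across its assigned targets, which is why the totals tie out. A source with no assignment or a zero driver total would break that property, so the sketch raises an error rather than silently dropping the cost.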

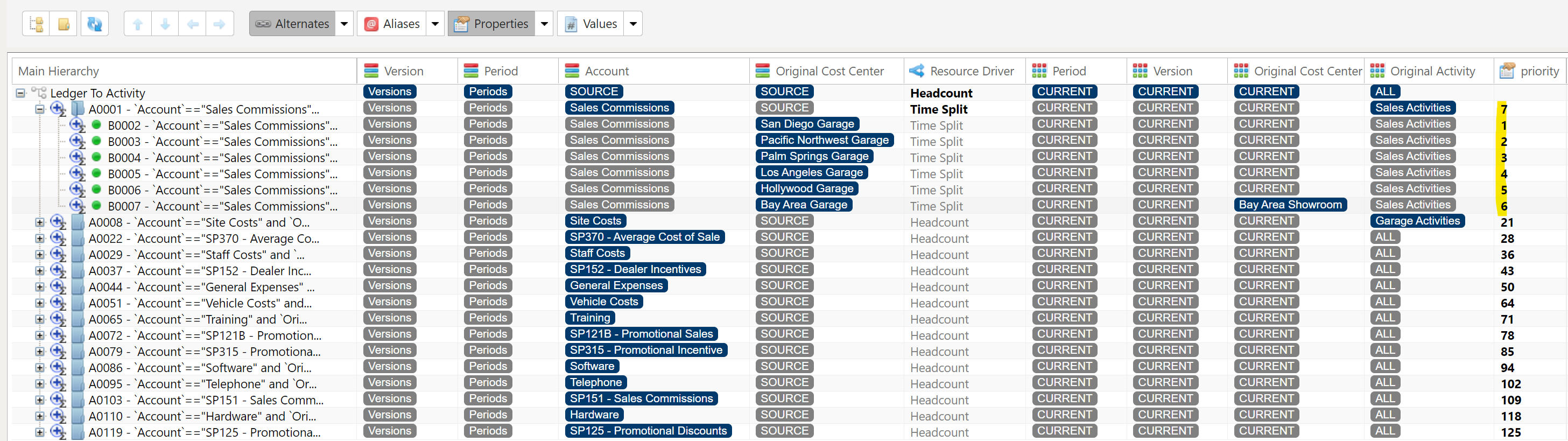

Allocations are accessible in PlaidCloud as a transform option. To set up an allocation, first set up assignments, then configure an allocation transform that uses those assignments to allocate inbound records using a specified driver table.

Assignments are special dimensions. They are accessed within the Dimensions section of a PlaidCloud Project.

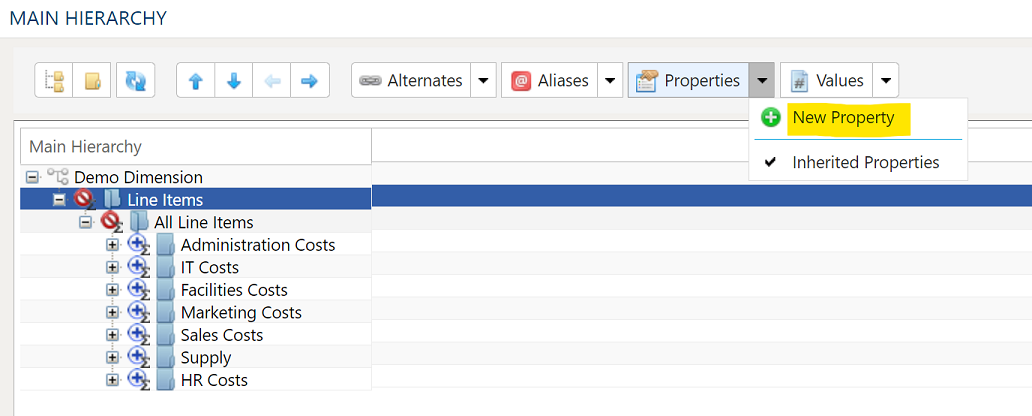

To set up an assignment dimension, perform the following steps:

- From the project screen, navigate to the Dimensions tab

- Create a new dimension

1.2.2 - Recursive Allocations

Content coming soon...

1.3 - Results and Troubleshooting

1.3.1 - Allocation Results

Content coming soon...

1.3.2 - Troubleshooting Allocations

Stranded Cost

Stranded cost is....

Over Allocation of Cost

Over allocation of cost is when you end up with more output cost...

Incorrect Allocation of Cost

Incorrect allocation of costs happens when...

2 - Custom App Sandbox

2.1 - Getting Started with the Custom App Sandbox

What is the Sandbox

The PlaidCloud Sandbox allows for the deployment of your own custom apps with native local access to data and PlaidCloud operations. The Sandbox environment provides a full compute environment for building custom applications to augment your use of PlaidCloud.

All custom apps run using Kubernetes deployment processes, therefore a basic understanding of Kubernetes objects is necessary. A Hello World example is available to show you how to deploy a simple application.

Available Resources

There is a soft resource limit on Sandbox apps, with the expectation that resource usage will not be abused. We can support large amounts of compute if needed, but please discuss it with us before attempting to deploy. Contact us if you expect to need significant resources.

The applications running in the Sandbox have direct access to the Lakehouse and to as many Postgres databases as you need. Postgres databases are designed to handle moderately sized data, so they are well suited to storing configurations and other metadata. For primary data storage, use the Lakehouse, as it can store large amounts of data and remain performant.

All PlaidCloud APIs are also available directly from the Sandbox without using a public URL to help with data transfer speeds.

Image Requirements

Any image that supports a Docker-based Kubernetes deployment is suitable for a custom app. Only *nix-based images are currently supported. If you need to run a Windows-based image, please contact us.

Integrate with Your CI/CD Pipeline

The Kubernetes deployment of the Sandbox app uses GitOps processes. This allows you to implement your own CI/CD process for image builds and deployments. Your custom app git repo is continuously monitored for changes, so your Sandbox is updated as you make updates.

3 - Dashboards

3.1 - Learning About Dashboards

Description

Dashboards support a wide range of use cases from static reporting to dynamic analysis. Dashboards support complex reporting needs while also providing an intuitive point-and-click interface. There may be times when you run into trouble. A member of the PlaidCloud Support Team is always available to assist you, but we have also compiled some tips below in case you run into a similar problem.

Common Questions and Answers for Dashboard

Preferred Browser

Due to frequent caching, Google Chrome is usually the best web browser to use with Dashboard. If you are using another browser and encounter a problem, we suggest first clearing the cache and cookies to see if that resolves the issue. If not, then we suggest switching to Google Chrome and seeing if the problem recurs.

Sync Delay

- Problem: After unpublishing and publishing tables in the Dashboards area, the data does not appear to be syncing properly.

- Solutions: Refresh the dashboard. Currently, old table data is cached, so it is necessary to refresh the dashboard when rebuilding tables.

Table Sync Error

- Problem: After recreating a table using the same published name as a previous table, the table is not syncing, even after hitting refresh on the dashboard, publishing, unpublishing, and republishing the table.

- Solutions: Republish the table with a different name. The Dashboard data model does not allow for duplicate tables, or tables with the same published name and project ID.

Cache Warning

- Problem: A warning popped up on the upper right saying “Loaded data cached 3 hours ago. Click to force-refresh.”

- Solutions: Click on the warning to force-refresh the cache. You can also click the drop-down menu beside “Edit dashboard” and select “Force refresh dashboard” there. Either of these options will refresh within the system and is preferred to refreshing the web browser itself.

Permission Warning

- Problem: My published dashboard is populating with the same error in each section where data should be populated: “This endpoint requires the datasource… permission”

- Solutions: Check that the datasources are not old. Most likely, the charts are pulling from outdated material. If this happens, update the charts with new datasources.

- Problem: I am getting the same permission warning from above, but my colleague can view the chart data.

- Solutions: If the problem is that one individual can see the data in the charts and another cannot, the second person may need to be granted permission by someone within the permitted category. To do so:

- Go to Charts

- Select the second small icon of a pencil and paper next to the chart you want to grant access to

- Click Edit Table

- Click Detail

- Click Owners and add the name of the person you want to grant access to and save.

Saving Modified Filters to Dashboard

- Problem: I modified filters in my draft model and want to save them to my dashboard. The filters are not in the list. In my draft model, a warning stated, “There is no chart definition associated with this component, could it have been deleted? Delete this container and save to remove this message.”

- Solutions: Go to “Edit Chart.” From there, make sure the “Dashboards” section has the correct dashboard filled in. If it is blank, add the correct dashboard name.

Formatting Numbers: Breaks

- Problem: My number formatting is broken and out of order.

- Solutions: The most likely reason for this break is the presence of nulls in a numeric column. Use a filter to exclude the rows where the numeric column is null, then try running it again. If that does not work, review the material provided here: http://bl.ocks.org/zanarmstrong/05c1e95bf7aa16c4768e or here: https://github.com/apache-superset/superset-ui/issues. Finally, always feel free to reach out to a PlaidCloud Support team member. This problem is known, and a more permanent solution is being developed.

Formatting Numbers

To round numbers to nearest integer:

- Do not use: ,.0f

- Instead use: ,d or $,d for dollars

Importing Existing Dashboard

- Problem: I’m importing an existing dashboard and getting an error on my export.

- Solutions: First, check whether the dashboard has a “Slug.” To do this, open Edit Dashboard; the second section is titled Slug. If that section is empty or says “null,” then this is not the problem. Otherwise, if there is any other value in that field, you need to ensure that the export JSON has a unique slug value. Change the slug to something unique.

3.2 - Using Dashboards

Description

Usually, members will have access to multiple workspaces and projects. Having this data in multiple spots, however, may not always be desirable. This is why PlaidCloud makes it possible to view all of the accessible data in a single location through dashboards and highly intuitive data exploration. PlaidCloud Dashboards (where the dashboards and data exploration are integrated) provides a rich palette of visualization and data exploration tools that can operate on virtually any size dataset. This setup also makes it possible to create dashboards and other visualizations that combine information across projects and workspaces, including ad hoc analysis.

Editing a Table

The message you receive after creating a new table also directs you to edit the table configuration. While there are more advanced features for editing the configuration, we will start with a limited, simpler portion. To edit the table configuration:

- Click on the edit icon of the desired table

- Click the “List Columns” tab

- Arrange the columns as desired

- Click “Save”

This allows you to define the way you want to use specific columns of your table when exploring your data.

- Groupable: If you want users to group metrics by a specific field

- Filterable: If you need to filter on a specific field

- Count Distinct: If you want to get the distinct count of this field

- Sum: If this is a metric you want to sum

- Min: If this is a metric you want to gather basic summary statistics for

- Max: If this is a metric you want to gather basic summary statistics for

- Is temporal: This should be checked for any date or time fields

Exploring Your Data

To start exploring your data, simply click on the desired table. By default, you’ll be presented with a Table View.

Getting a Data Count

To get the count of all the records in the table:

Change the filter to “Since”

Enter the desired since filter

- You can use simple phrases such as “3 years ago”

Enter the desired until filter

- The upper limit for time defaults to “now”

Select the “Group By” header

Type “Count” into the metrics section

Select “COUNT(*)”

Click the “Query” button

You should then see your results in the table.

If you want to find the count of a specific field or restriction:

- Type in the desired restriction(s) in the “Group By” field

- Run the query

Restricting Result Number

If you only need a certain number of results, such as the top 10:

- Select “Options”

- Type in the desired max result count in the “Row Limit” section

- Click “Query”
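Conceptually, the count, Group By, and Row Limit steps above correspond to a SQL query of roughly the following shape. This is an illustrative sketch only: the `orders` table, its `region` and `created_at` columns, and the use of SQLite as a stand-in are all assumptions, and the query a dashboard actually issues may differ.

```python
import sqlite3

# Hypothetical table and column names; SQLite stands in for the real data source.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, created_at TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [
        ("East", "2019-12-31"),
        ("East", "2021-03-01"),
        ("East", "2023-06-15"),
        ("West", "2024-01-10"),
    ],
)

# "Since" and "until" bound the time range; ISO dates compare correctly as text.
time_range = ("2021-01-01", "2025-01-01")

# COUNT(*) over the whole time range.
total = conn.execute(
    "SELECT COUNT(*) FROM orders WHERE created_at >= ? AND created_at < ?",
    time_range,
).fetchone()[0]

# The same count split by a "Group By" field and capped by a row limit.
row_limit = 10
by_region = conn.execute(
    "SELECT region, COUNT(*) FROM orders "
    "WHERE created_at >= ? AND created_at < ? "
    "GROUP BY region ORDER BY COUNT(*) DESC LIMIT ?",
    (*time_range, row_limit),
).fetchall()

print(total, by_region)
```

The row limit is applied after grouping and sorting, so a limit of 10 here would mean the ten groups with the highest counts.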

Additional Visualization Tools

To expand abbreviated values to their full length:

- Select “Edit Table Config”

- Click “List Sql Metric”

- Click “Edit Metric”

- Click “D3Format”

To edit the unit of measurement:

- Select “Edit Table Config”

- Click “List Sql Metric”

- Click “Edit Metric”

- Click “SQL Expression”

To change the chart type:

- Scroll to “Chart Options”

- Fill in the required fields

- Click “Query”

From here you are able to set axis labels, margins, ticks, etc.

3.3 - Formatting Numbers and Other Data Types

Formatting numbers and other data types

There are two ways of formatting numbers in PlaidCloud. One is to transform the values in the tables directly. The second (and more common) is to format them on display, so the values keep their full precision in the table while the user sees them in a cleaner, more appropriate form.

When I display a value on a dashboard, how do I format it the way I want? The core way to display a value is through a chart object on a dashboard. Charts can be Tables, Big Numbers, Bar Charts, and so on. Each chart object may have a slightly different place or means to display the values. For example, in Tables, you can change the format for each column, and for a Big Number, you can change the format of the number.

To change the format, edit the chart and locate the D3 FORMAT or NUMBER FORMAT field. For a Big Number chart, click on the CUSTOMIZE tab, and you will see NUMBER FORMAT. For a Table, click on the CUSTOMIZE tab, select a number column (displayed with a #) in CUSTOMIZE COLUMN and you will see the D3 FORMAT field.

The default value is Adaptive formatting, which adjusts the format based on the values. If you want to fix a specific format instead (e.g. $12.23 or 12,345,678), select the format you want from the dropdown or manually type a different value (if the field allows).

D3 Formatting - what is it?

D3 Formatting is a structured, formalized means to display data results in a particular format. For example, in certain situations you may wish to display a large value as 3B (3 billion), formatted as .3s in D3 format, or as 3,001,238,383, formatted as ,d. Another common example is the choice between showing dollar values rounded to the nearest dollar with $,d, or with 2 decimal places using $,.2f, which shows a dollar sign, commas, 2 decimal places, and fixed-point notation.

For a deeper dive into D3, see the following site: GitHub D3

General D3 Format

The general structure of D3 is the following:

[[fill]align][sign][symbol][0][width][,][.precision][~][type]

The fill can be any character (like a period, x or anything else). If you have a fill character, you then have an align character following it, which must be one of the following:

> - Right-aligned within the available space. (Default behavior).

< - Left-aligned within the available space.

^ - Centered within the available space.

= - like >, but with any sign and symbol to the left of any padding.

The sign can be:

- - blank for zero or positive and a minus sign for negative. (Default behavior.)

+ - a plus sign for zero or positive and a minus sign for negative.

( - nothing for zero or positive and parentheses for negative.

(space) - a space for zero or positive and a minus sign for negative.

The symbol can be:

$ - apply currency symbol.

The zero (0) option enables zero-padding; this implicitly sets fill to 0 and align to =.

The width defines the minimum field width; if not specified, then the width will be determined by the content. For example, if you have 8, the width of the field will be 8 characters.

The comma (,) option enables the use of commas as separators (e.g. for thousands).

Depending on the type, the precision indicates either the number of digits that follow the decimal point (types f and %), or the number of significant digits (types (none), g, r, s and p). If the precision is not specified, it defaults to 6 for all types except (none), which defaults to 12.

The tilde ~ option trims insignificant trailing zeros across all format types. This is most commonly used in conjunction with types r, s and %.
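As a rough illustration of how the pieces of the specifier line up, the sketch below splits a format string into the parts described above. It is a minimal Python sketch, not D3's own parser: the `SPEC_PATTERN` and `parse_spec` names are made up for this example, and only the options covered in this section are recognized.

```python
import re

# Regex mirroring [[fill]align][sign][symbol][0][width][,][.precision][~][type].
# Only the options described in this section are recognized.
SPEC_PATTERN = re.compile(
    r"^(?:(?P<fill>.)?(?P<align>[<>=^]))?"  # optional fill character, then align
    r"(?P<sign>[-+( ])?"                    # sign
    r"(?P<symbol>\$)?"                      # currency symbol
    r"(?P<zero>0)?"                         # zero-padding flag
    r"(?P<width>\d+)?"                      # minimum field width
    r"(?P<comma>,)?"                        # thousands separator
    r"(?:\.(?P<precision>\d+))?"            # precision
    r"(?P<trim>~)?"                         # trim trailing zeros
    r"(?P<type>[a-z%])?$"                   # type
)


def parse_spec(spec):
    """Split a format specifier into its named parts, or raise ValueError."""
    match = SPEC_PATTERN.match(spec)
    if match is None:
        raise ValueError(f"not a recognized format specifier: {spec!r}")
    parts = match.groupdict()
    # Convert the flags to booleans and the numeric fields to integers.
    for flag in ("zero", "comma", "trim"):
        parts[flag] = parts[flag] is not None
    for number in ("width", "precision"):
        if parts[number] is not None:
            parts[number] = int(parts[number])
    return parts


print(parse_spec("x^+$16,.2f"))
```

Reading a specifier left to right in this order is usually the quickest way to work out why a format string is rejected or produces an unexpected result.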

Types

| Type | Description |
|---|---|
| f | fixed point notation. (common) |
| d | decimal notation, rounded to integer. (common) |
| % | multiply by 100, and then decimal notation with a percent sign. (common) |
| g | either decimal or exponent notation, rounded to significant digits. |
| r | decimal notation, rounded to significant digits. |
| s | decimal notation with an SI prefix, rounded to significant digits. |
| p | multiply by 100, round to significant digits, and then decimal notation with a percent sign. |

Examples

| Expression | Input | Output | Notes |
|---|---|---|---|
| ,d | 12345.67 | 12,346 | rounds the value to the nearest integer, adds commas |
| ,.2f | 12345.678 | 12,345.68 | adds commas, rounds to 2 decimal places |
| $,.2f | 12345.67 | $12,345.67 | adds a $ symbol, has commas, 2 digits after the decimal |
| $,d | 12345.67 | $12,346 | adds a $ symbol, has commas, rounds to the nearest integer |
| .<10, | 151925 | 151,925... | left-aligns the value and pads it with periods on the right, 10 characters wide, with commas |
| 0>10 | 12345 | 0000012345 | pads the value with zeroes to the left, 10 characters wide. This works well for fixing the width of a code value |
| ,.2% | 13.215 | 1,321.50% | has commas, 2 digits to the right of the decimal, converts to a percentage, and shows a % symbol |
| x^+$16,.2f | 123456 | xx+$123,456.00xx | pads with "x", centered, has a +/- sign, $ symbol, 16 characters wide, has commas, 2 decimal places |
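D3's format syntax is modeled after Python's format specification mini-language, so several rows of the table above can be checked with Python's built-in `format()`. The sketch below is only an approximation of what a dashboard does: Python has no `$` symbol option and its `d` type does not accept fractional values, so those two cases are worked around by hand.

```python
# D3 expressions from the table whose syntax Python's format() also accepts.
# The (value, spec) pairs are taken from the table rows.
shared_syntax = [
    (12345.678, ",.2f"),  # commas, 2 decimal places
    (151925, ".<10,"),    # left-aligned, padded with periods to 10 characters
    (12345, "0>10"),      # right-aligned, padded with zeroes to 10 characters
    (13.215, ",.2%"),     # multiply by 100, commas, 2 decimal places, % sign
]
results = {spec: format(value, spec) for value, spec in shared_syntax}

# Python differs from D3 here: its "d" type rejects floats, so round first.
rounded = format(round(12345.67), ",d")

# Python has no "$" symbol option, so the currency sign is added by hand.
dollars = "$" + format(12345.67, ",.2f")

# Sign first, then "$", then the digits; finally center in 16 characters with "x".
signed = format(123456, "+,.2f")
centered = format(signed[0] + "$" + signed[1:], "x^16")

for spec, text in results.items():
    print(f"{spec!r:10} -> {text}")
print(rounded, dollars, centered)
```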

3.4 - Example Calculated Columns

Description

Data in dashboards can be augmented with calculated columns. Each dataset will contain a section for calculated columns. Calculated columns can be written and modified with PostgreSQL-flavored SQL.

Navigating to a dataset

In order to view and edit metrics and calculated expressions, perform the following steps:

- Sign into plaidcloud.com and navigate to dashboards

- From within visualize.plaidcloud.com, navigate to Data > Datasets

- Search for a dataset to view or modify

- Modify the dataset by hovering over the edit button beneath Actions

Examples

count

COUNT(*)

min

min("MyColumnName")

max

max("MyColumnName")

coalesce (useful for converting nulls to 0.0, for instance)

coalesce("BaselineCost",0.0)

substring

substring("PERIOD",6,2)

cast

CAST("YEAR" AS integer)-1

concat

concat("Biller Entity" , ' ', "Country_biller")

to_char

to_char("date_created", 'YYYY-mm-dd')

left

left("period",4)

divide

divide, with a hack for avoiding DIV/0 errors

sum("so_infull")/(count(*)+0.00001)
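A common alternative to adding a small constant is to wrap the denominator in `NULLIF(..., 0)`, which is standard SQL and available in PostgreSQL. The sketch below compares both approaches on a hypothetical `shipments` table with made-up `num` and `den` columns. SQLite is used purely as a stand-in so the example is self-contained; note that SQLite returns NULL for division by zero, whereas PostgreSQL raises an error.

```python
import sqlite3

# Hypothetical data: group A has a non-zero denominator, group B sums to zero.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE shipments (grp TEXT, num REAL, den REAL)")
conn.executemany(
    "INSERT INTO shipments VALUES (?, ?, ?)",
    [("A", 1.0, 2.0), ("A", 2.0, 2.0), ("B", 5.0, 0.0)],
)

rows = conn.execute(
    """
    SELECT grp,
           sum(num) / (sum(den) + 0.00001) AS epsilon_ratio,
           sum(num) / nullif(sum(den), 0)  AS nullif_ratio
    FROM shipments
    GROUP BY grp
    ORDER BY grp
    """
).fetchall()

# Map each group to (small-constant result, NULLIF result).
ratios = {grp: (eps, safe) for grp, eps, safe in rows}
for grp, (eps, safe) in ratios.items():
    print(grp, eps, safe)
```

With a zero denominator, the small-constant version returns a very large number, while the `NULLIF` version returns NULL, which may be easier to spot or filter on a dashboard. For non-zero denominators the small constant also shifts the result slightly.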

conditional statement

CASE WHEN "Field_A"= 'Foo' THEN max(coalesce("Value_A",0.0)) - max(coalesce("Value_B",0.0)) END

CASE WHEN "sol_otif_pod_missing" = 1 THEN

'POD is missing.'

ELSE

'POD exists.'

END

case when "Customer DC" = "origin_dc" or "order_reason_type" = 'Off Schedule' or "mot_type" = 'UPS' then

'Yes'

else

'No'

end

CASE WHEN "module_type" is NULL THEN '---' ELSE "module_type" END

CASE WHEN "NODE_TYPE" = 'External' THEN '3rd Party' ELSE "ENTITY_LOCATION_DESCRIPTION" END

concatenate

concat("Class",' > ',"Product Family",' > ',"Meta Series")

3.5 - Example Metrics

Description

Data in dashboards can be augmented with metrics. Each dataset will contain a section for Metrics. Metrics can be written and modified with PostgreSQL-flavored SQL.

Navigating to a dataset

In order to view and edit metrics and calculated expressions, perform the following steps:

- Sign into plaidcloud.com and navigate to dashboards

- From within visualize.plaidcloud.com, navigate to Data > Datasets

- Search for a dataset to view or modify

- Modify the dataset by hovering over the edit button beneath Actions

Examples

Calculated columns are typically additional columns made by combining logic and existing columns.

convert a date to text

to_char("week_ending_sol_del_req", 'YYYY-mm-dd')

various SUM examples

SUM(Value)

SUM(-1*"value_usd_mkp") / (0.0001+SUM(-1*"value_usd_base"))

(SUM("Value_USD_VAT")/SUM("Value_USD_HEADER"))*100

sum(CASE WHEN Material_Type = 'Gloves' THEN delivery_cases ELSE 0 END)

sum("total_cost") / sum("delivery_count")

various case examples

CASE WHEN

SUM("distance_dc_xd") = 0 THEN 0

ELSE

sum("XD")/sum("distance_dc_xd")

END

sum(CASE

WHEN "FUNCTION" = 'OM' THEN "VALUE__FC"

ELSE 0.0

END)
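To see what the `sum(CASE ...)` pattern computes, the sketch below runs it against a tiny in-memory table. The `costs` table and its rows are invented for illustration, and SQLite stands in for the dashboard's data source.

```python
import sqlite3

# Invented sample rows: two belong to function 'OM', one to 'HR'.
conn = sqlite3.connect(":memory:")
conn.execute('CREATE TABLE costs ("FUNCTION" TEXT, "VALUE__FC" REAL)')
conn.executemany(
    "INSERT INTO costs VALUES (?, ?)",
    [("OM", 10.0), ("OM", 5.5), ("HR", 7.0)],
)

# The CASE expression contributes the value for 'OM' rows and 0.0 otherwise,
# so the sum covers only the 'OM' rows without filtering the whole query.
om_total, grand_total = conn.execute(
    """
    SELECT sum(CASE WHEN "FUNCTION" = 'OM' THEN "VALUE__FC" ELSE 0.0 END),
           sum("VALUE__FC")
    FROM costs
    """
).fetchone()

print(om_total, grand_total)
```

Because the condition lives inside the aggregate, the same chart can show a conditional total next to an unconditional one.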

count

count(*)

First and Cast

public.first(cast("PRETAX_SEQ" AS NUMERIC))

Round

round(Sum("GROSS PROFIT"),0)

Concat

concat("GCOA","CC Code")

4 - Data and Service Connectors

4.1 - Cloud Service Connections

PlaidCloud provides direct service connections for services that don't use REST or JSON-RPC requests.

The individual service guides will help provide the specific setup necessary to connect.

4.1.1 - Quandl Connector

Connection Documentation

Quandl is now Nasdaq Data Link. The documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Service Connection

Documentation under development

4.2 - Database and Data Lake Connections

PlaidCloud enables connection directly to databases, data lakes, query engines, and lakehouses. Connections can also utilize a PlaidLink agent if services are behind a firewall.

Since the terms database, lakehouse, query engine, and potentially others are all used to refer to data that can be queried through a connection, we generally treat all of these as "Databases", despite the wide variety of underlying technologies that perform the query.

4.2.1 - Amazon Athena

Upstream Documentation

Amazon Athena documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.2 - Amazon Redshift

Upstream Documentation

Amazon Redshift has several guides related to use located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.3 - Apache Doris

Upstream Documentation

Apache Doris documentation is here.

The Apache project homepage for Apache Doris is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.4 - Apache Hive

Upstream Documentation

Apache Hive documentation is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Data Lake Connector

Documentation under development

4.2.5 - Apache Spark

Upstream Documentation

The Apache Spark documentation is here.

The Apache project is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.6 - Azure Databricks

Upstream Documentation

Azure Databricks documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

In order to obtain the connection credentials necessary for PlaidCloud to communicate with a Databricks warehouse, follow the steps below:

- Open the Databricks console

- Under the User Settings in the upper right, select "Settings"

- Navigate to the "Developers" section

- Generate an Access Token with a sufficient lifespan specified

- Navigate to the "SQL Warehouses" area

- Select the warehouse required for connecting

- Capture the connection details, including the host and HTTP path

- Navigate to the warehouse data area

- Capture the initial catalog and initial schema information

With the information above, the connection form can be completed and tested with the Databricks warehouse.
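Before filling in the connection form, it can help to gather the captured values in one place and confirm none are blank. The sketch below is one hedged way to do that in Python; the `DatabricksConnectionDetails` class and its field names are hypothetical and may not match the labels on the PlaidCloud connection form.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class DatabricksConnectionDetails:
    """Values gathered in the steps above (field names are hypothetical)."""

    host: str
    http_path: str
    access_token: str
    catalog: str
    schema: str

    def missing(self) -> List[str]:
        """Return the names of the fields that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Placeholder values only; substitute the details captured from the console.
details = DatabricksConnectionDetails(
    host="<workspace-host>",
    http_path="<warehouse-http-path>",
    access_token="<access-token>",
    catalog="<initial-catalog>",
    schema="",
)
print(details.missing())
```

Access tokens are secrets, so in practice they would be read from a secure store rather than written into a script.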

Create Database Connector

Documentation under development

4.2.7 - Databend

Upstream Documentation

Databend documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.8 - Exasol

Upstream Documentation

Exasol documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.9 - Greenplum

Upstream Documentation

The Greenplum documentation is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.10 - IBM DB2

Upstream Documentation

The IBM DB2 documentation is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.11 - IBM Informix

Upstream Documentation

IBM Informix documentation is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.12 - Microsoft Fabric

Upstream Documentation

The Microsoft Fabric documentation is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.13 - Microsoft SQL Server

Upstream Documentation

Microsoft SQL Server documentation is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.14 - MySQL

Upstream Documentation

MySQL documentation is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.15 - ODBC

Upstream Documentation

Using the ODBC connector will require configuration specific to the database. While ODBC is a generic connection type, each database may implement some specific configurations. Please refer to the ODBC documentation for the target database.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.16 - Oracle

Upstream Documentation

The Oracle database documentation is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.17 - PlaidCloud Lakehouse

Upstream Documentation

There is very little configuration necessary for using the built-in PlaidCloud Lakehouse. The documentation for the service is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Lakehouse Connector

Documentation under development

4.2.18 - PostgreSQL

Upstream Documentation

PostgreSQL documentation is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.19 - Presto

Upstream Documentation

The Presto documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.20 - SAP HANA

Upstream Documentation

The SAP HANA documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.21 - Snowflake

Upstream Documentation

The Snowflake documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.22 - StarRocks

Upstream Documentation

StarRocks documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.2.23 - Trino

Upstream Documentation

The Trino documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Database Connector

Documentation under development

4.3 - ERP System Connections

PlaidCloud provides direct connections for ERP systems.

The individual service guides will help provide the specific setup necessary to connect.

4.3.1 - Infor Connector

Upstream Documentation

The Infor documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create ERP Connection

Documentation under development

4.3.2 - JD Edwards (Legacy) Connector

Upstream Documentation

The JDE documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create ERP Connection

Documentation under development

4.3.3 - Oracle EBS Connector

Upstream Documentation

The Oracle EBS documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Oracle EBS utilizes the standard Oracle database connection. This connection provides the connectivity to query, load, and execute PL/SQL programs in Oracle.

If the EBS instance has the REST API interface available, it can also be accessed using the same approach as an Oracle Cloud REST connection.

Create ERP Connection

Documentation under development

4.3.4 - Oracle Fusion Connector

Upstream Documentation

The Oracle Fusion applications documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create ERP Connection

Documentation under development

4.3.5 - SAP Analytics Cloud Connector

Upstream Documentation

The SAP Analytics Cloud documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create SAC Connection

Documentation under development

4.3.6 - SAP ECC Connector

Upstream Documentation

SAP has removed all ECC documentation and currently only provides documentation for S/4HANA.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create ERP Connection

Documentation under development

4.3.7 - SAP Profitability and Cost Management (PCM) Connector

Upstream Documentation

The SAP PCM legacy documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create PCM Connection

Documentation under development

4.3.8 - SAP Profitability and Performance Management (PaPM) Connector

Upstream Documentation

The SAP PaPM documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create PaPM Connection

Documentation under development

4.3.9 - SAP S/4HANA Connector

Upstream Documentation

The documentation for SAP S/4HANA is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create ERP Connection

Documentation under development

4.4 - Git Repository Connections

PlaidCloud provides direct connections for Git repositories.

The individual service guides will help provide the specific setup necessary to connect.

4.4.1 - AWS CodeCommit Repository Connector

Service Documentation

The AWS CodeCommit service documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Git Connection

Documentation under development

4.4.2 - Azure Repos Repository Connector

Service Documentation

The Azure Repos service documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Git Connection

Documentation under development

4.4.3 - BitBucket Repository Connector

Service Documentation

The BitBucket service documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Git Connection

Documentation under development

4.4.4 - GitHub Repository Connector

Service Documentation

The GitHub service documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Git Connection

Documentation under development

4.4.5 - GitLab Repository Connector

Service Documentation

The GitLab service documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Git Connection

Documentation under development

4.5 - Google Service Connections

PlaidCloud provides direct connections for Google services.

The individual service guides will help provide the specific setup necessary to connect.

4.5.1 - Google BigQuery Connector

Connection Documentation

The Google BigQuery documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Query Connection

Documentation under development

4.5.2 - Google Sheets

Connection Documentation

Google Sheets is oriented more towards consumers. For technical documentation, refer to the developer documentation here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Spreadsheet Connection

Documentation under development

4.6 - Open Table Format Connections

PlaidCloud provides direct connections for Open Table Formats for use with the PlaidCloud Lakehouse service. This allows for hybrid query execution without moving data.

The individual service guides will help provide the specific setup necessary to connect.

4.6.1 - Apache Hive Open Table Format

Catalog Documentation

Apache Hive documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Catalog Connection

Documentation under development

4.6.2 - Apache Hudi Open Table Format

Catalog Documentation

Apache Hudi documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Catalog Connection

Documentation under development

4.6.3 - Apache Iceberg Open Table Format

Catalog Documentation

Apache Iceberg documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Catalog Connection

Documentation under development

4.6.4 - Delta Lake Open Table Format (Databricks Catalog)

Catalog Documentation

The Delta Lake documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Catalog Connection

Documentation under development

4.7 - REST Connections

PlaidCloud provides a comprehensive way to connect to any REST service using standard authentication processes. However, REST-based services commonly have nuanced differences in how authentication takes place and in the parameters needed for a successful handshake.

The individual service guides will help provide the specific setup necessary to connect.

In addition to the specific REST connectors, PlaidCloud also provides a generic REST connector that can be configured to connect and process responses from other REST services.

4.7.1 - Gusto REST Connector

API Documentation

The API documentation for this connector is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.7.2 - Microsoft Dynamics 365 REST Connector

API Documentation

The API documentation for this connector is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.7.3 - Mulesoft REST Connector

API Documentation

The API documentation for this connector is determined by the service endpoints that Mulesoft is handling.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.7.4 - Netsuite REST Connector

API Documentation

The API documentation for this connector is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.7.5 - Paycor REST Connector

API Documentation

The API documentation for this connector is located here.

Paycor Setup

The Paycor API Application and Initiation process is a little more involved than that of other REST providers. Please be sure to go through the steps outlined on their Quick Start Page.

Key values you must capture are:

- Application OAuth Client ID

- Application OAuth Client Secret

- APIm Subscription Key

- Scope Key of current application version

Activate it here, choosing Production or Sandbox depending on your need:

| Environment | Activation Form URL |
|---|---|
| Sandbox | https://hcm-demo.paycor.com/AppActivation/ClientActivation |
| Production | https://hcm.paycor.com/AppActivation/ClientActivation |

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.7.6 - Quickbooks REST Connector

API Documentation

The API documentation for this connector is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.7.7 - Ramp REST Connector

API Documentation

The API documentation for this connector is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.7.8 - Sage Intacct REST Connector

API Documentation

The API documentation for this connector is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.7.9 - Salesforce REST Connector

API Documentation

The API documentation for this connector is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.7.10 - Stripe REST Connector

API Documentation

The API documentation for this connector is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.7.11 - Workday REST Connector

API Documentation

The API documentation for this connector is located here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create REST Connector

Documentation under development

4.8 - Team Collaboration Connections

PlaidCloud provides direct connections for team collaboration services.

The individual service guides will help provide the specific setup necessary to connect.

4.8.1 - Microsoft Teams Connector

Connection Documentation

Microsoft Teams Admin documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Teams Connection

Documentation under development

4.8.2 - Slack Connector

Connection Documentation

Slack Admin documentation is here.

Security Requirements

Documentation under development

Obtain Credentials

Documentation under development

Create Slack Connection

Documentation under development

5 - Data Lakehouse Service

5.1 - Getting Started

About

The PlaidCloud Data Lakehouse Service (DLS) stands on the shoulders of great technology. The service is based on Databend, a lakehouse suitable for big data analytics and traditional data warehouse operations while supporting vast storage as a data lake. Its extensive analytical optimizations, array of indexing types, high compression, and native time travel capabilities make it ideal for a wide array of uses.

The PlaidCloud DLS also has the ability to integrate with existing data lakes on Apache Hive, Apache Iceberg, and Delta Lake. This allows for accessing vast amounts of already stored data using a modern and fast query engine without having to move any data.

The PlaidCloud DLS continues our goal of providing the best open source options for our customers to eliminate lock-in while also providing services as turn-key solutions.

Managing, upgrading, and maintaining a data lakehouse requires special skills and investment. Both can be hard to find when you need them. The PlaidCloud service eliminates that need while still providing deep technical access for those who need or want total control.

Key Benefits

Always on

The PlaidCloud DLS provides always-on query access. You don't have to schedule availability or incur additional costs for usage outside the expected time.

This also means there is no first-query delay and no cache to warm up before optimal performance is achieved.

Read and Write the way you expect

The PlaidCloud DLS operates like a traditional database so you don't have to decide which instances are read-only or have special processes to load data from a write instance. All instances support full read and write with no special ETL or data loading processes required.

If you are used to traditional databases, you don't need to learn any new skills or change your applications. The DLS is a drop-in replacement for ANSI SQL-compliant databases. If you are coming from other databases such as Oracle, MySQL, or Microsoft SQL Server, some adjustments to your query logic may be necessary, but not to the overall process.

Since SAP HANA and Amazon Redshift use the PostgreSQL dialect, those seeking a portable alternative will find PlaidCloud DLS a straightforward option.

Economical

With usage-based billing, you only pay for what you use. There are no per-query or extra processing charges. Triple-redundant storage, incredible IOPS, wide data throughput, time travel queries, and out-of-band backups are all standard at a reasonable price.

We eliminate the headache of having to choose different data warehousing tiers based on optimizing storage costs. We offer the ability to select how long each table's history is kept live for time travel queries and recovery.

Zero (0) days of time travel creates a transient table that will have no time travel or recovery. This is suitable for intermediate tables or tables that can be reproduced from other data.

You can set tables to have from one (1) to ninety (90) days of time travel. During the time travel window you can query the data as of different snapshots or points in time, and you can recover a table as of a point in time into a new table. This is an incredibly powerful capability that surpasses traditional backups because the historical state of a table can be viewed with a simple query rather than by restoring a backup.
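The retention rules and point-in-time access described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not PlaidCloud's implementation: the helper names and the table names are hypothetical, and the generated SQL assumes the `AT (TIMESTAMP => ...)` clause from upstream Databend is available on your DLS instance.

```python
from datetime import datetime

MAX_TIME_TRAVEL_DAYS = 90  # upper bound stated in the text above


def describe_retention(days: int) -> str:
    """Classify a time travel setting using the 0 and 1-90 day rules."""
    if days == 0:
        return "transient"  # no time travel and no recovery
    if 1 <= days <= MAX_TIME_TRAVEL_DAYS:
        return "time-travel"
    raise ValueError(
        f"time travel must be 0 to {MAX_TIME_TRAVEL_DAYS} days, got {days}"
    )


def snapshot_query(table: str, as_of: datetime) -> str:
    """Build a query that reads `table` as it existed at `as_of`.

    Assumes Databend-style AT (TIMESTAMP => ...) syntax.
    """
    stamp = as_of.strftime("%Y-%m-%d %H:%M:%S")
    return f"SELECT * FROM {table} AT (TIMESTAMP => '{stamp}'::TIMESTAMP)"


def recover_to_new_table(table: str, new_table: str, as_of: datetime) -> str:
    """Build a statement that copies the historical state into a new table."""
    return f"CREATE TABLE {new_table} AS {snapshot_query(table, as_of)}"


# Hypothetical table names, for illustration only.
when = datetime(2024, 1, 15, 9, 30, 0)
print(describe_retention(0))
print(snapshot_query("sales", when))
print(recover_to_new_table("sales", "sales_restored", when))
```

The point-in-time timestamp must fall inside the table's configured time travel window; a table set to zero days has no history to query.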

Highly performant

We employ multiple caching strategies to ensure peak performance.

We also extensively tested optimal compute, networking, and RAM configurations to achieve maximum performance. As new technology and capabilities become available, our goal is to incorporate features that increase performance.

Scale out and scale up capable

The ability to both scale up and scale out is essential for a data lakehouse, especially when it is performing analytical processes.

Scaling up means more queries can run simultaneously. This is useful if you have many users or applications that require many concurrent processes.

Scaling out means more compute power can be applied to each query by breaking the data processing up across many CPUs. This is useful on large data where summarizations or other analytical processes such as machine learning, AI, or geospatial analysis are required.

The PlaidCloud DLS allows scale expansion either on-demand or based on pre-defined events/metrics.

Integrated with PlaidCloud Analyze for Low/No Code operations

Analyze, Dashboards, Forms, PlaidXL, and JupyterLab are quickly connected to any PlaidCloud DLS. This provides point-and-click operations to automate data-related activities and to build beautiful visualizations for reporting and insightful analysis.

From an Analyze project, you can select any DLS instance. This also provides the ability for Analyze projects to switch among DLS instances to facilitate testing and Blue/Green upgrade processes. It also allows quickly restoring an Analyze Project from a DLS point-in-time backup.

Clone

Making a clone of an existing lakehouse performs a complete copy of the source lakehouse. A clone shares nothing with the original lakehouse, so it is a quick way to isolate a complete lakehouse for testing or to keep a live archive at a specific point in time.

Another important feature is that you can clone a lakehouse to a different data center. This might be desirable if global usage shifts from one region to another, or if having a copy of a warehouse in various regions for development/testing improves internal processes.

Web or Desktop SQL Client Access

A web SQL console is provided within PlaidCloud. It is a full-featured SQL client, so it supports most use cases. However, for more advanced use cases, a desktop client or other service may be desired. The PlaidCloud DLS uses standard security and access controls, enabling remote connections and controlled user permissions.

Access options allow quick and easy start-up as well as ongoing query and analytics access. A firewall allows control over external access.

DBeaver provides a nice free desktop option that has a Greenplum driver to fully support PlaidCloud DWS instances. They also provide a commercial version called DBeaver Pro for those that require/prefer use of licensed software.

5.2 - Pricing

Usage Based

The cost of a PlaidCloud Data Lakehouse instance is determined by a limited number of factors that you control. All costs incurred are usage based.

The factors that impact cost are:

- Concurrency Factor - The size of each compute node in your warehouse instance

- Parallelism Factor - The number of nodes in your warehouse instance

- Allocated Storage - The number of Gigabytes of storage consumed by your warehouse instance

- Network Egress - The number of Gigabytes of network egress. Excludes traffic to PlaidCloud applications within the same region. Ingress is always free.

- Time Travel Period - How many days, weeks, or months to retain time travel history on tables

Storage, backups, and network egress are calculated in gigabytes (GB), where 1 GB is 2^30 bytes. This unit of measurement is also known as a gibibyte (GiB).

All prices are in USD. If you are paying in another currency please convert to your currency using the appropriate rate.

Billing is on an hourly basis. The monthly prices shown are illustrative and based on a 730-hour month.
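The unit and billing conventions above come down to two small calculations, sketched here in Python. The function names are hypothetical, and the hourly rate in the example is a made-up figure chosen only to show the arithmetic; it is not a PlaidCloud price.

```python
from decimal import Decimal

HOURS_PER_MONTH = 730    # illustrative month length used for monthly prices
BYTES_PER_GB = 2 ** 30   # this document's "GB" is a gibibyte (GiB)


def monthly_estimate(hourly_rate_usd: Decimal) -> Decimal:
    """Convert an hourly rate into the illustrative 730-hour monthly figure."""
    return hourly_rate_usd * HOURS_PER_MONTH


def bytes_to_gb(num_bytes: int) -> float:
    """Convert a byte count into billing gigabytes (2^30 bytes each)."""
    return num_bytes / BYTES_PER_GB


example_rate = Decimal("0.10")  # hypothetical rate, not an actual price
print(monthly_estimate(example_rate))  # 73.00
print(bytes_to_gb(5 * 2 ** 30))        # 5.0
```

Because billing is hourly, an instance that runs for only part of a month is charged for the hours it actually ran, not the full 730-hour figure.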

Controlling Factors

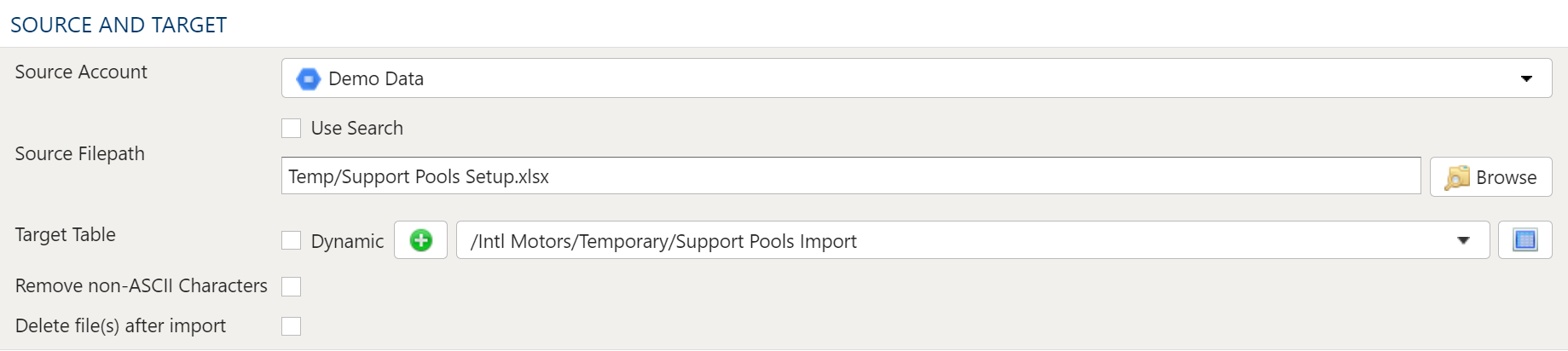

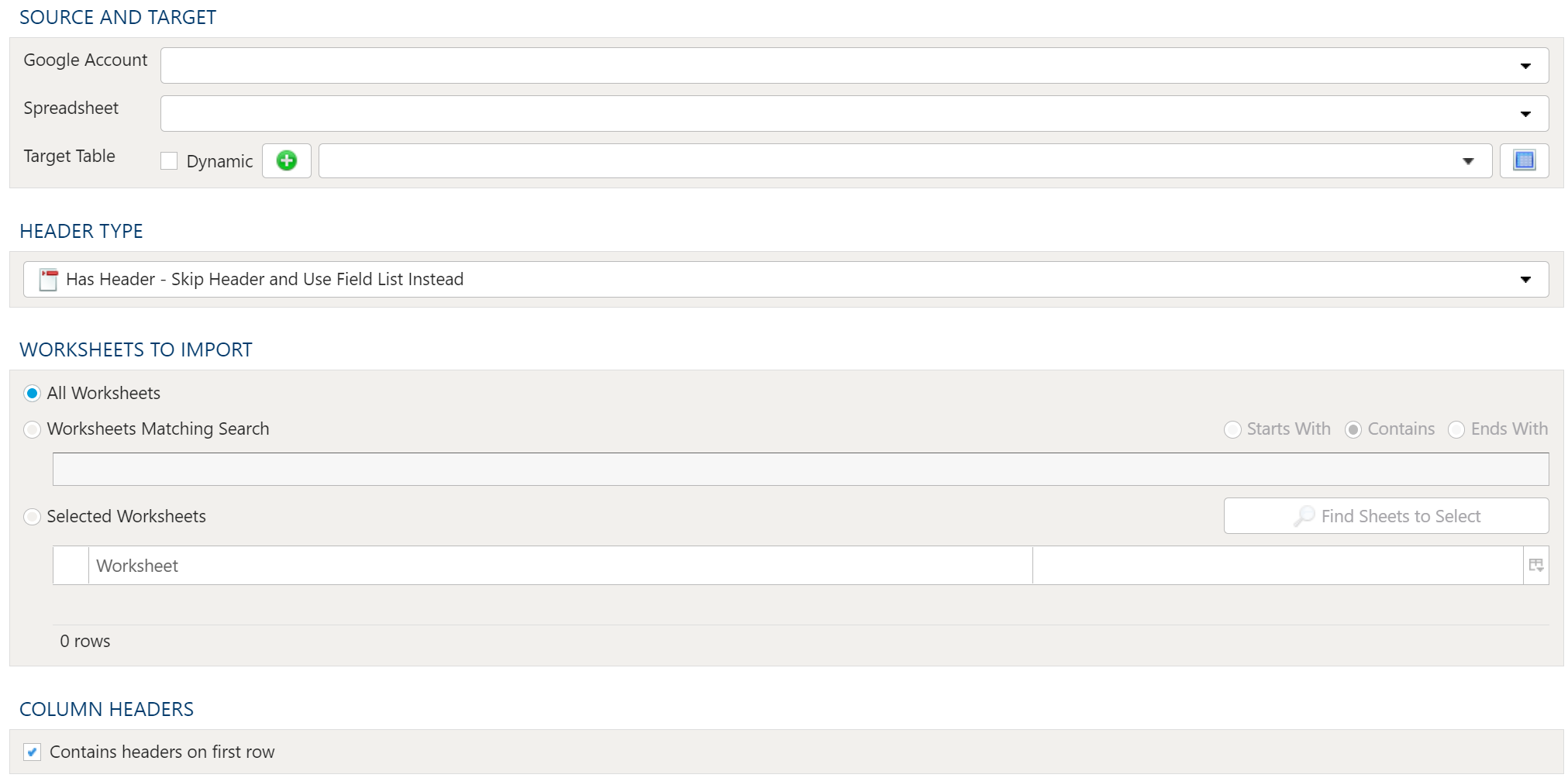

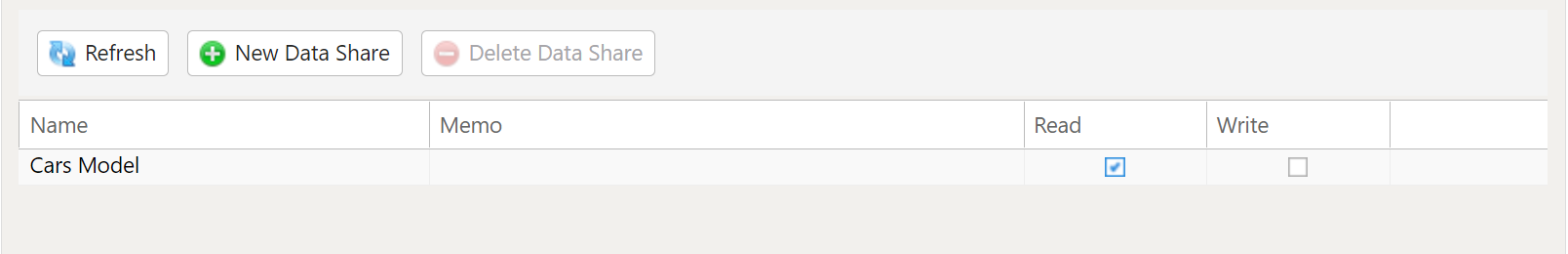

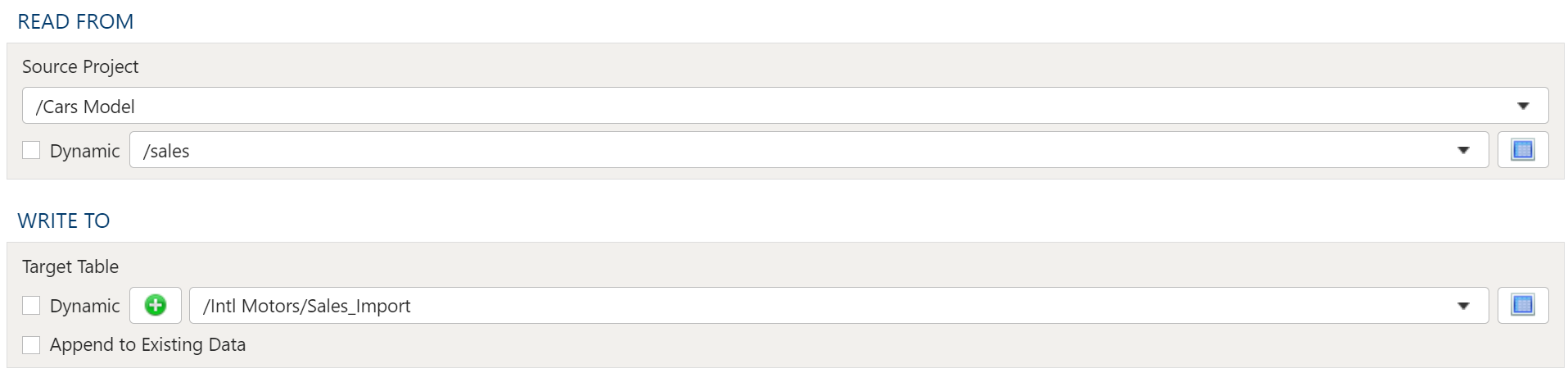

Concurrency Factor